
Automating Data Recovery in Kubernetes with Nova

Ensuring data availability and minimizing downtime are crucial aspects of any robust IT infrastructure. In the realm of Kubernetes, managing disaster recovery can become complex, especially in multi-cluster environments. This blog post explores how to achieve a fully automated disaster recovery strategy using Nova, a powerful multi-cluster orchestrator from Elotl.

In this first part, we’ll hear from Selvi Kadirvel, Tech Lead Engineering Manager at Elotl. Selvi brings extensive experience in Kubernetes management, having spearheaded the development of tools like Luna (an intelligent cluster autoscaler) and Nova. She’ll guide us through the benefits of automating data recovery with Nova and its unique capabilities within a Kubernetes landscape.

Stay tuned for part two, where Maciek Urbanski, a Platform Engineer at Elotl, will showcase a live demo of Nova's disaster recovery features in action!

Kubernetes for Stateful Workloads

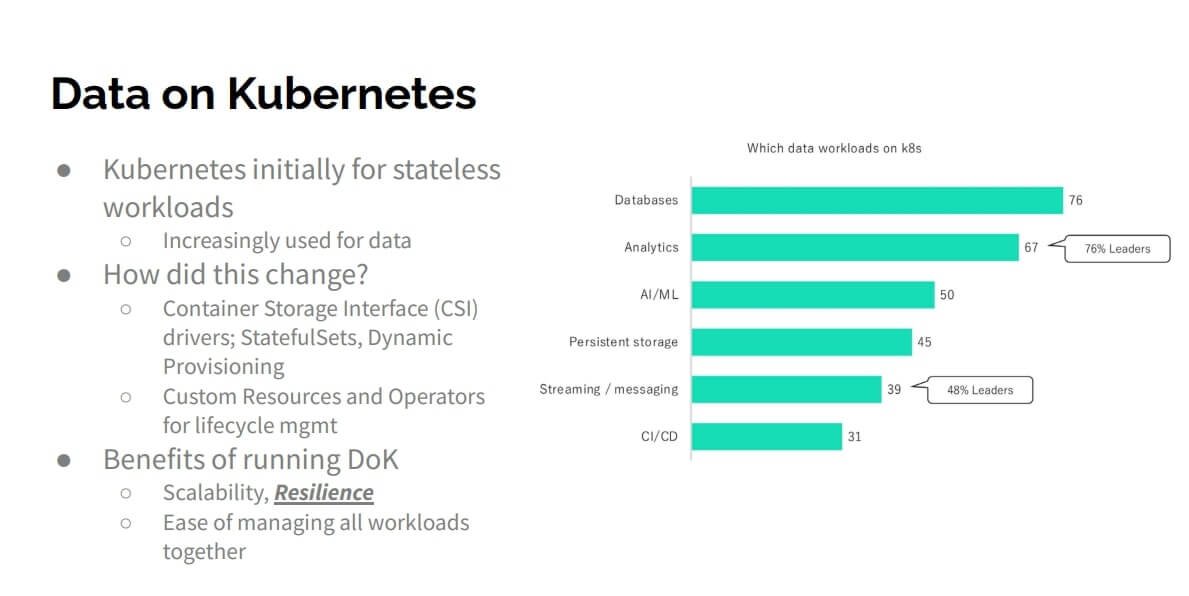

Kubernetes, initially designed for stateless applications, is finding its way into the world of data management. This includes big data analytics, machine learning workloads, and databases, as evidenced by the Data on Kubernetes Community Report (late 2022). The report highlights several databases as popular choices for migration to Kubernetes.

Factors Driving Database Adoption on Kubernetes

Several advancements have contributed to the increased adoption of databases on Kubernetes. These include:

- Improved Container Storage Interface (CSI): CSI is a standard interface between Kubernetes and storage systems. Each CSI driver integrates with your cluster and communicates with a particular storage provider, and the community currently offers over 100 CSI drivers.

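- Enhanced StatefulSets and Dynamic Provisioning: StatefulSets give each pod a stable identity and its own persistent storage, which makes it possible to manage the lifecycle of stateful applications. Dynamic provisioning automatically creates PersistentVolumes at runtime when your applications request storage through PersistentVolumeClaims.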

- Custom Resources and Operators: Custom resources extend the Kubernetes API with new object types, and operators are controllers that act on those objects. Operators play a crucial role in managing the entire lifecycle of database workloads on Kubernetes, including backups, snapshots, and configuration changes.
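At its core, an operator runs a reconcile loop: it compares the desired state declared in a custom resource with what is actually running, then acts to close the gap. The minimal Python sketch below models that idea in memory only. The `DatabaseClusterSpec` and `ObservedState` types, their fields, and the action strings are hypothetical and do not correspond to any real operator's API.

```python
from dataclasses import dataclass


@dataclass
class DatabaseClusterSpec:
    """Desired state, as a user might declare it in a hypothetical custom resource."""
    replicas: int
    version: str
    backup_enabled: bool


@dataclass
class ObservedState:
    """What the operator currently sees running in the cluster."""
    replicas: int
    version: str
    has_backup_schedule: bool


def reconcile(spec: DatabaseClusterSpec, observed: ObservedState) -> list[str]:
    """Return the actions needed to move the observed state toward the spec."""
    actions: list[str] = []
    if observed.version != spec.version:
        # Upgrade first so that any new replicas start on the desired version.
        actions.append(f"upgrade {observed.version} -> {spec.version}")
    if observed.replicas < spec.replicas:
        actions.append(f"scale up {observed.replicas} -> {spec.replicas}")
    elif observed.replicas > spec.replicas:
        actions.append(f"scale down {observed.replicas} -> {spec.replicas}")
    if spec.backup_enabled and not observed.has_backup_schedule:
        actions.append("create backup schedule")
    elif not spec.backup_enabled and observed.has_backup_schedule:
        actions.append("delete backup schedule")
    return actions


spec = DatabaseClusterSpec(replicas=3, version="16", backup_enabled=True)
observed = ObservedState(replicas=1, version="15", has_backup_schedule=False)
print(reconcile(spec, observed))
# ['upgrade 15 -> 16', 'scale up 1 -> 3', 'create backup schedule']
```

A real operator repeats this comparison on every watch event or periodic resync. That only works because the loop is idempotent: once the observed state matches the spec, it returns no actions.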

Benefits of Running Databases on Kubernetes

The primary benefits of running databases on Kubernetes mirror those of stateless workloads: scalability and resilience. In addition, running databases on the same Kubernetes infrastructure as the rest of your applications simplifies operational management for platform engineers and operators. The Data on Kubernetes Community report explores this in more detail, investigating whether the move to Kubernetes only reduces operational complexity or also delivers tangible business benefits.

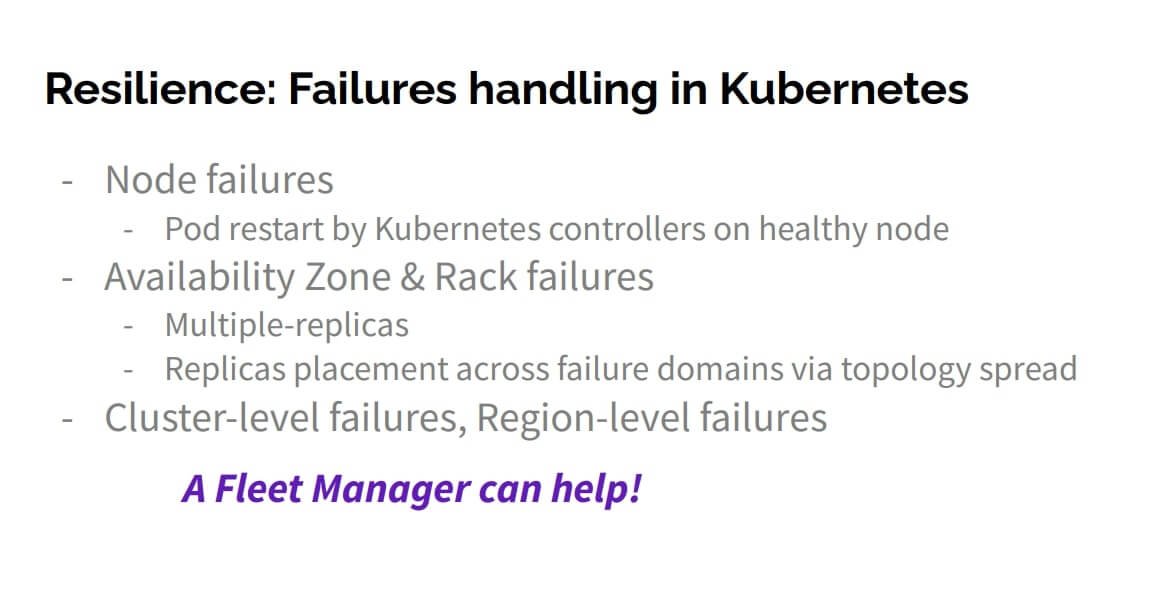

Failures in Kubernetes

Now, let's look at how Kubernetes handles failures. When you deploy workloads across the nodes in your data center, simple node failures are handled automatically by Kubernetes controllers (for example, the ReplicaSet behind a Deployment). These controllers recreate the affected pods on other healthy nodes, so application users typically see little disruption.

However, what happens during larger failures? Here’s how Kubernetes handles them natively:

- Rack Failures: For slightly larger failures, such as an entire rack of nodes going down, Kubernetes relies on replicas. You define your workload manifest to include multiple replicas placed across different failure domains, such as racks or availability zones (AZs). For instance, to tolerate the loss of a single rack or zone, you'd typically deploy at least three replicas, ideally spread across three different AZs.

- Cluster Failures: But what happens during even larger failures, such as an entire Kubernetes cluster becoming inaccessible? Native Kubernetes mechanisms operate within a single cluster, and the control plane components themselves (the API server, scheduler, controller manager, and etcd) can fail. Region-level outages, though rare, can also occur, for example when a cloud provider throttles capacity during seasonal demand peaks.
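The replica guidance above can be checked with simple arithmetic. Spread replicas round-robin across failure domains, then count how many survive when any single domain is lost. With three replicas in three zones, a single-zone outage still leaves two of three, which is a majority. That matters for databases that elect a leader by quorum. The Python sketch below assumes three hypothetical zones named `zone-a` to `zone-c`. In a real cluster you would express the spreading declaratively, for example with a Pod `topologySpreadConstraints` entry keyed on the `topology.kubernetes.io/zone` label, rather than placing Pods by hand.

```python
from collections import Counter


def spread(replicas: int, domains: list[str]) -> Counter:
    """Place replicas round-robin so per-domain counts differ by at most one."""
    placement: Counter = Counter()
    for i in range(replicas):
        placement[domains[i % len(domains)]] += 1
    return placement


def worst_case_survivors(placement: Counter) -> int:
    """Replicas left after losing the single most-populated failure domain."""
    total = sum(placement.values())
    return total - max(placement.values())


def keeps_quorum(placement: Counter) -> bool:
    """True if a strict majority of replicas survives any single-domain outage."""
    total = sum(placement.values())
    return worst_case_survivors(placement) > total // 2


zones = ["zone-a", "zone-b", "zone-c"]
three_zones = spread(3, zones)        # one replica per zone
print(worst_case_survivors(three_zones), keeps_quorum(three_zones))  # 2 True
two_zones = spread(3, zones[:2])      # two replicas share a zone
print(worst_case_survivors(two_zones), keeps_quorum(two_zones))      # 1 False
```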

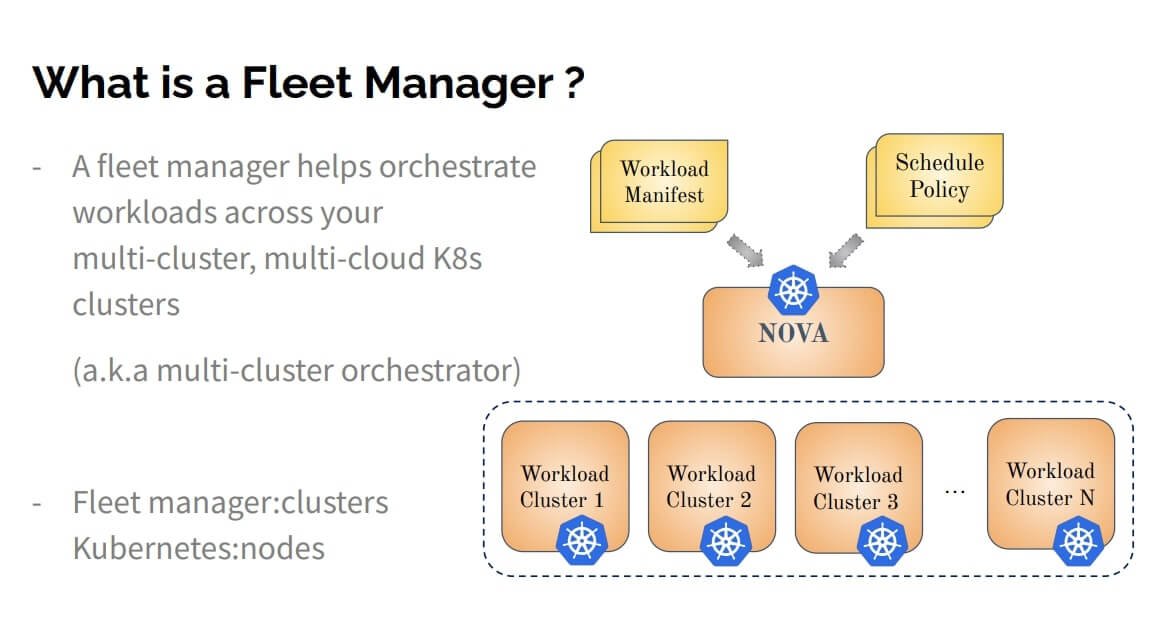

Introducing Fleet Managers

This is where a fleet manager comes into play. A fleet manager orchestrates your workloads across a multi-cluster, multi-cloud Kubernetes environment. It can manage clusters across public clouds, private clouds, and on-premises deployments, as long as each one is a Kubernetes cluster.

Nova, from Elotl, is one such fleet manager. However, other options exist, such as Karmada from Huawei Cloud, KCP from Red Hat, and Cellar. To understand fleet managers better, think of them as managing groups of clusters similarly to how Kubernetes manages groups of nodes.

Here’s how Nova simplifies disaster recovery for Kubernetes:

- Centralized Management: Nova runs in a dedicated Kubernetes management cluster and abstracts away the complexities of the individual workload clusters.

- Seamless User Experience: Users interact with Nova using the familiar Kubernetes API, requiring no changes to workload manifests.

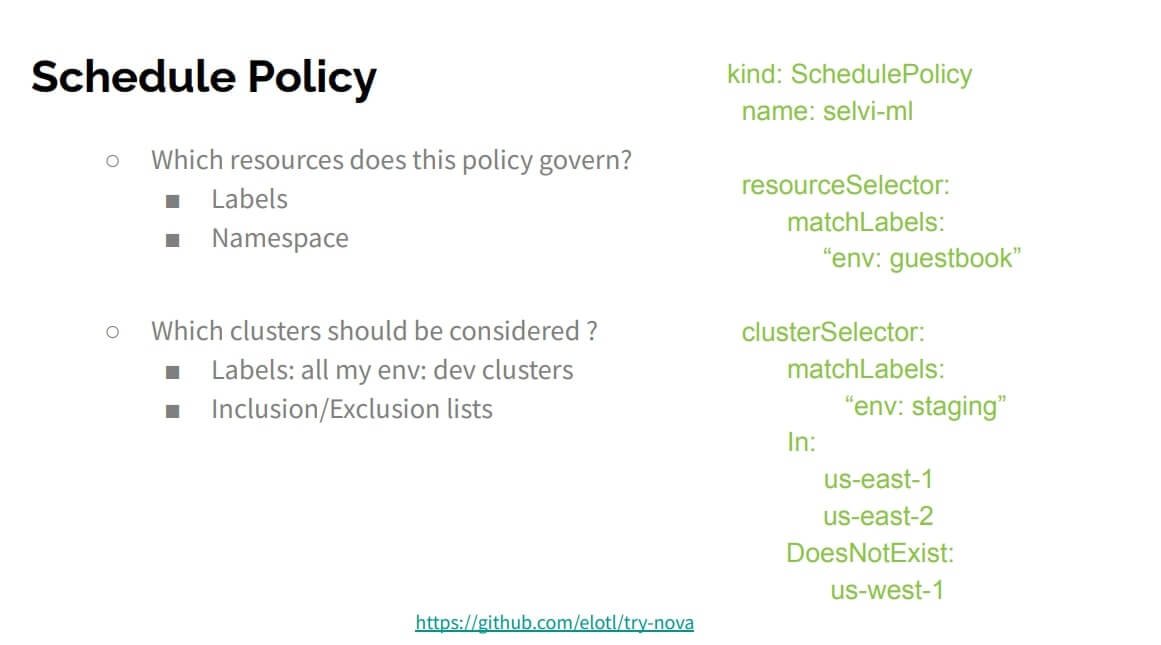

- Scheduling Policies: The only additional element is a schedule policy, a Kubernetes custom resource that defines which workloads are deployed to which clusters.

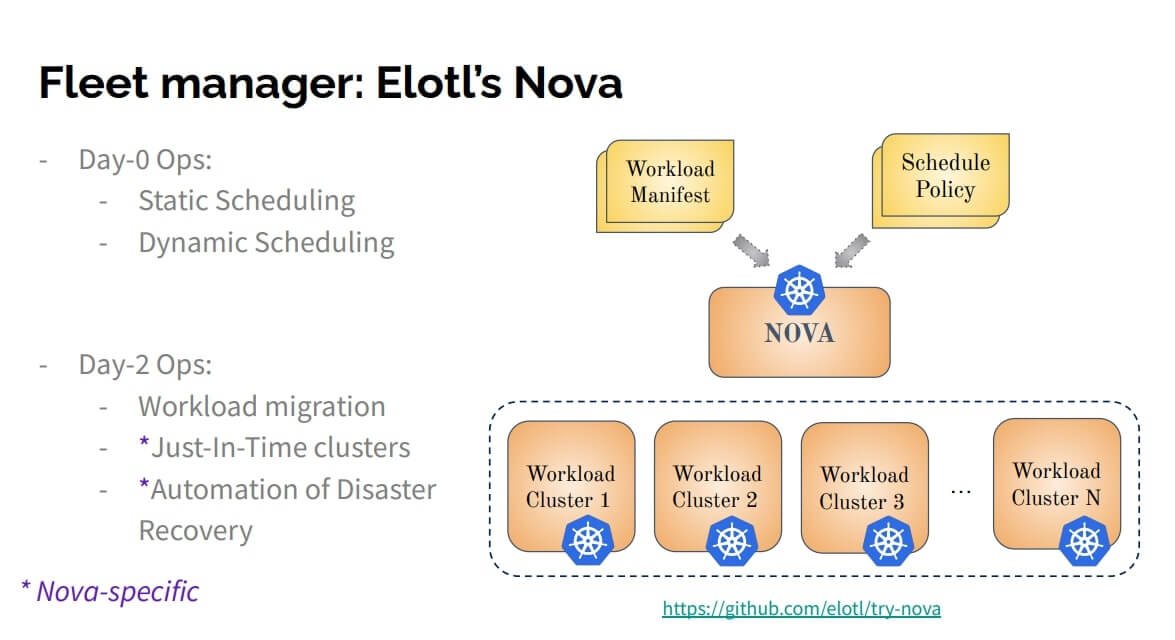

Nova’s Day Zero and Day Two Operations

Before diving into schedule policies, let's look at Nova's functionality, grouped into Day Zero (planning and deployment) and Day Two (ongoing management) operations.

1. Day Zero Operations:

- Static Scheduling: Much like a CI/CD pipeline that promotes releases through stages, static scheduling directs workloads to specific clusters based on their environment (e.g., Dev, Staging, Production).

- Dynamic Scheduling: Leverages information about cluster resource availability (CPU, memory, GPU) to automatically place workloads.

2. Day Two Operations:

- Workload Migration: Nova can migrate workloads between clusters based on changing resource needs or disaster recovery scenarios.

- Just-in-Time Clusters: Nova can provision clusters on-demand to meet temporary workload requirements.

- Disaster Recovery Automation: We’ll discuss this crucial feature in detail later.

The Importance of Dynamic Scheduling

Here are three common use cases that highlight the importance of dynamic scheduling:

- Dynamic Cluster Selection for Development: Distributing workloads across multiple development clusters with sufficient capacity without manual intervention. Nova’s capacity-based scheduling automates this process.

- Workload Scaling: When workload requirements change (e.g., scaling up ML training jobs), Nova can automatically migrate workloads to a cluster with enough capacity for the increased resource needs.

- Selective Staging Deployments: Moving workloads from staging to production while excluding overloaded clusters. Nova's schedule policies let you specify inclusion and exclusion lists for targeted deployments.
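Capacity-based scheduling with inclusion and exclusion lists can be pictured as a filter-then-score step, much like the kube-scheduler's filtering and scoring phases for nodes. The Python below is only an illustration. The `Cluster` and `Request` types, the cluster names, and the "most free CPU wins" scoring rule are assumptions, not Nova's actual algorithm. The GPU field shows how GPU availability can act as one more filter.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cluster:
    name: str
    free_cpu: float          # cores
    free_memory_gib: float
    free_gpus: int = 0


@dataclass
class Request:
    cpu: float
    memory_gib: float
    gpus: int = 0


def pick_cluster(
    clusters: list[Cluster],
    req: Request,
    include: Optional[set[str]] = None,
    exclude: Optional[set[str]] = None,
) -> Optional[Cluster]:
    """Filter by allow/deny lists and free capacity, then prefer the most free CPU."""
    exclude = exclude or set()
    candidates = [
        c for c in clusters
        if (include is None or c.name in include)
        and c.name not in exclude
        and c.free_cpu >= req.cpu
        and c.free_memory_gib >= req.memory_gib
        and c.free_gpus >= req.gpus
    ]
    if not candidates:
        return None  # nothing fits; a real scheduler would leave the workload pending
    return max(candidates, key=lambda c: c.free_cpu)


fleet = [
    Cluster("staging-1", free_cpu=48, free_memory_gib=192),
    Cluster("prod-1", free_cpu=4, free_memory_gib=16),
    Cluster("prod-2", free_cpu=32, free_memory_gib=128, free_gpus=4),
]
job = Request(cpu=8, memory_gib=32)
print(pick_cluster(fleet, job).name)                                  # staging-1
print(pick_cluster(fleet, job, include={"prod-1", "prod-2"}).name)    # prod-2
print(pick_cluster(fleet, job, include={"prod-1", "prod-2"}, exclude={"prod-2"}))  # None
print(pick_cluster(fleet, Request(cpu=8, memory_gib=32, gpus=2)).name)  # prod-2
```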

Understanding Schedule Policies

Schedule policies are custom Kubernetes resources that act as blueprints for workload placement within Nova. Each policy answers two key questions:

Which resources need to be placed? You can leverage either a namespace selector or a label selector to identify the resources in your Git repository that require placement.

- Label Selector: This selector targets resources based on the labels they possess. For instance, a label selector with the value “n_guestbook” would select all resources associated with your guestbook application, including deployments, jobs, service accounts, and role bindings.

- Namespace Selector (Optional): Namespaces group related resources together. While not used in the simplified example, a namespace selector can further refine the resource selection within Nova.

Which cluster is suitable for placement? The cluster selector in a schedule policy dictates which clusters are eligible to host the chosen resources. In the example, the cluster selector targets clusters with the label “env: staging,” ensuring the guestbook application is deployed to staging environments.
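The two questions a schedule policy answers correspond to ordinary Kubernetes label-selector semantics. The Python sketch below is not Nova's code. It implements `matchLabels` (every listed key must be present with exactly that value) and four `matchExpressions` operators (`In`, `NotIn`, `Exists`, `DoesNotExist`), with all requirements ANDed. It then applies one selector to resources and another to clusters. The `app: guestbook` label and the resource and cluster names are hypothetical; `env: staging` follows the cluster selector described above.

```python
from typing import Optional

Labels = dict[str, str]


def matches(labels: Labels, match_labels: Optional[Labels] = None,
            match_expressions: Optional[list[dict]] = None) -> bool:
    """Evaluate a Kubernetes-style label selector against a set of labels."""
    for key, value in (match_labels or {}).items():
        if labels.get(key) != value:
            return False
    for expr in match_expressions or []:
        key, op = expr["key"], expr["operator"]
        values = set(expr.get("values", []))
        if op == "In":
            ok = labels.get(key) in values
        elif op == "NotIn":
            # As in Kubernetes, objects without the key also satisfy NotIn.
            ok = labels.get(key) not in values
        elif op == "Exists":
            ok = key in labels
        elif op == "DoesNotExist":
            ok = key not in labels
        else:
            raise ValueError(f"unsupported operator: {op}")
        if not ok:
            return False
    return True


resources = {
    "deployment/guestbook-ui": {"app": "guestbook"},
    "serviceaccount/guestbook": {"app": "guestbook"},
    "deployment/other-app": {"app": "other"},
}
clusters = {
    "cluster-a": {"env": "staging"},
    "cluster-b": {"env": "production"},
    "cluster-c": {"env": "staging", "gpu": "true"},
}

# Question 1: which resources? Question 2: which clusters?
selected = [name for name, lbls in resources.items() if matches(lbls, {"app": "guestbook"})]
targets = [name for name, lbls in clusters.items() if matches(lbls, {"env": "staging"})]
print(selected)  # ['deployment/guestbook-ui', 'serviceaccount/guestbook']
print(targets)   # ['cluster-a', 'cluster-c']
```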

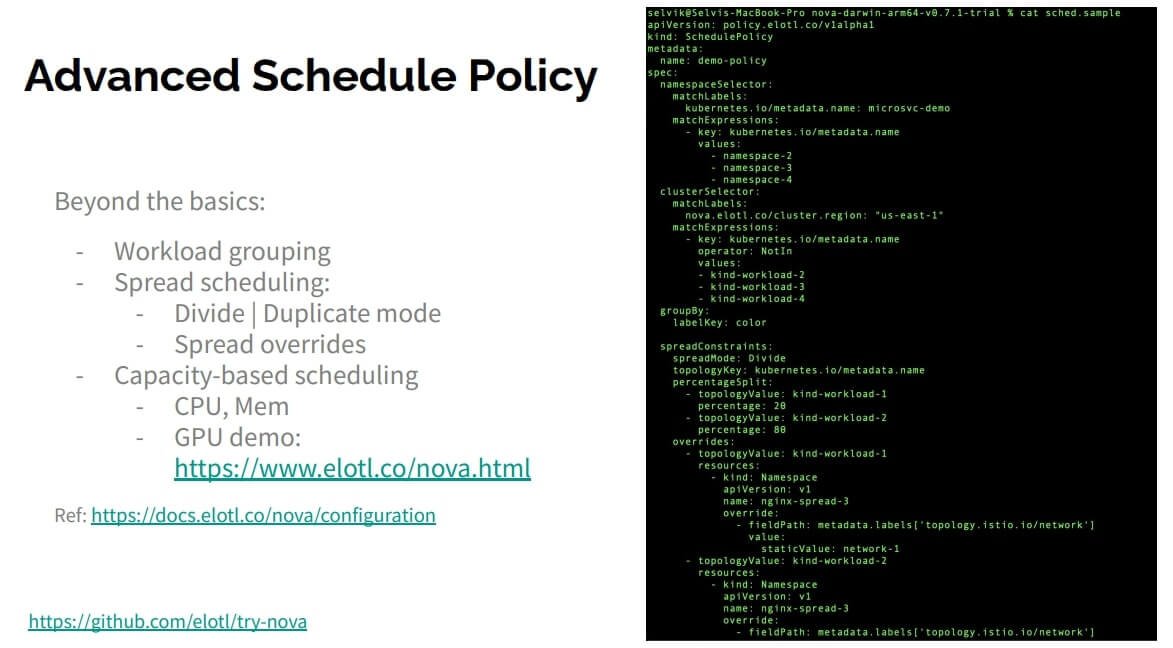

Advanced Scheduling Features

Nova offers additional scheduling functionalities beyond the basic selection criteria. For example, spread scheduling helps standardize workload clusters across development environments. This feature replicates specific secrets and namespaces across all your Dev clusters, eliminating the need to maintain multiple versions in your Git repository. This proves particularly beneficial for replicating standard workloads like logging or monitoring stacks across multiple clusters.

GPU resources are another notable aspect of Nova's scheduling capabilities: Nova can place workloads based on GPU availability across your clusters. The talk walks through a detailed example; here is one more key takeaway:

- Spread Placement with Overrides: When distributing Stateful Operator (STO) deployments across clusters, Nova’s spread placement ensures an even distribution. However, some infrastructure components might require slight namespace modifications. Nova’s override functionality allows you to modify specific fields within your manifest for different clusters, addressing such scenarios effectively. This exemplifies Day Zero scheduling.
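Spread placement with per-cluster overrides amounts to copying one base manifest to every target cluster and patching selected fields on specific clusters' copies. The sketch below assumes manifests are plain Python dicts and that overrides are addressed by hypothetical dotted paths such as `metadata.namespace`. Nova's own override syntax may differ, and the cluster, Secret, and namespace names are illustrative.

```python
import copy
from typing import Any


def set_path(obj: dict[str, Any], dotted_path: str, value: Any) -> None:
    """Set a nested field such as 'metadata.namespace', creating maps as needed."""
    *parents, leaf = dotted_path.split(".")
    for key in parents:
        obj = obj.setdefault(key, {})
    obj[leaf] = value


def spread_with_overrides(
    manifest: dict[str, Any],
    clusters: list[str],
    overrides: dict[str, dict[str, Any]],
) -> dict[str, dict[str, Any]]:
    """Return one copy of the manifest per cluster, with that cluster's overrides applied."""
    placed = {}
    for cluster in clusters:
        # Deep copy so an override for one cluster never leaks into another.
        doc = copy.deepcopy(manifest)
        for path, value in overrides.get(cluster, {}).items():
            set_path(doc, path, value)
        placed[cluster] = doc
    return placed


base = {
    "apiVersion": "v1",
    "kind": "Secret",
    "metadata": {"name": "registry-creds", "namespace": "monitoring"},
}
out = spread_with_overrides(
    base,
    clusters=["dev-1", "dev-2", "dev-3"],
    overrides={"dev-3": {"metadata.namespace": "observability"}},
)
for name, doc in out.items():
    print(name, doc["metadata"]["namespace"])
# dev-1 monitoring
# dev-2 monitoring
# dev-3 observability
```

Keeping one base manifest plus a small override map is what lets you avoid maintaining a separate copy per cluster in your Git repository.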

Day Two Operations: Disaster Recovery in Focus

Now, let’s shift our focus to Day Two operations, where disaster recovery takes center stage.

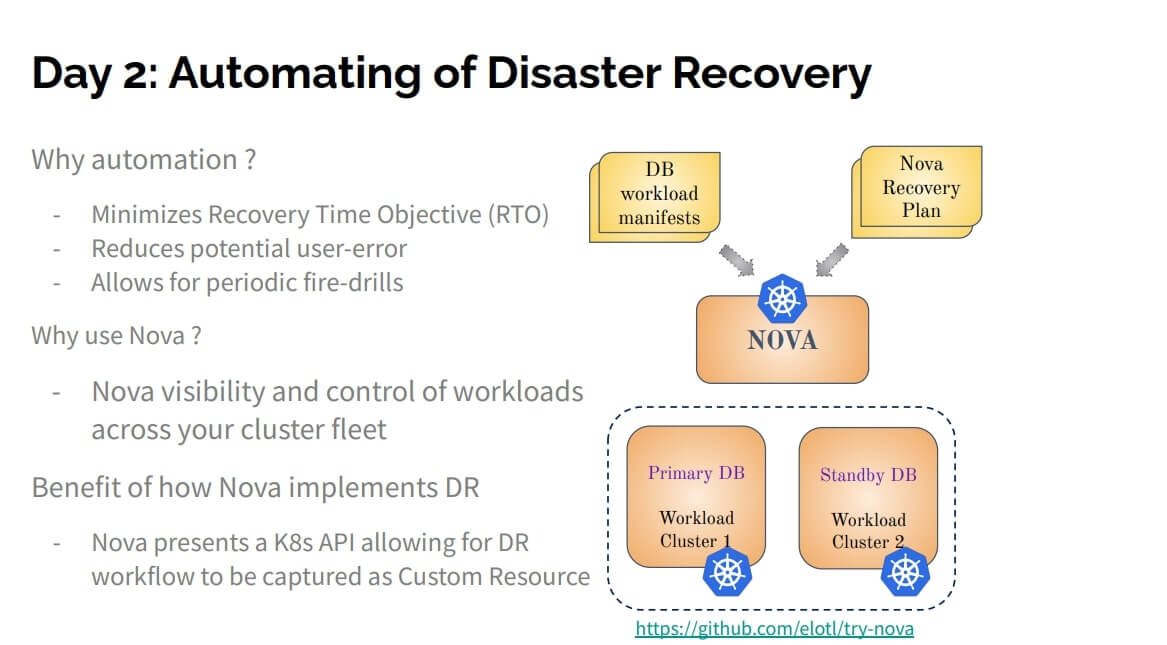

Automating Disaster Recovery with Nova

Why Automate Disaster Recovery?

Disaster recovery (DR) automation offers several compelling benefits:

- Reduced Recovery Time Objective (RTO): RTO is the target for how quickly a system must be restored after a failure. Automating DR processes shortens the actual recovery time, and the more of the process you automate, the lower the RTO you can achieve.

- Minimized User Errors: Manual steps or custom scripts in your DR plan increase the risk of human error. Automating disaster recovery in a Kubernetes-native way reduces this risk.

- Improved Disaster Recovery Testing: Automation facilitates regular “fire drills” where you execute your DR plan to ensure its effectiveness. An untested DR plan can become outdated and lead to failures during an actual incident.

Nova: A Strong Candidate for Disaster Recovery Automation

Nova’s unique capabilities make it well-suited for automating disaster recovery:

- Cluster Visibility and Control: Nova has visibility into all your clusters and can make changes within them. This comprehensive control streamlines the automation process.

- Kubernetes-Native Implementation: Nova leverages a custom resource called the Nova recovery plan, ensuring seamless integration with your existing Kubernetes setup.

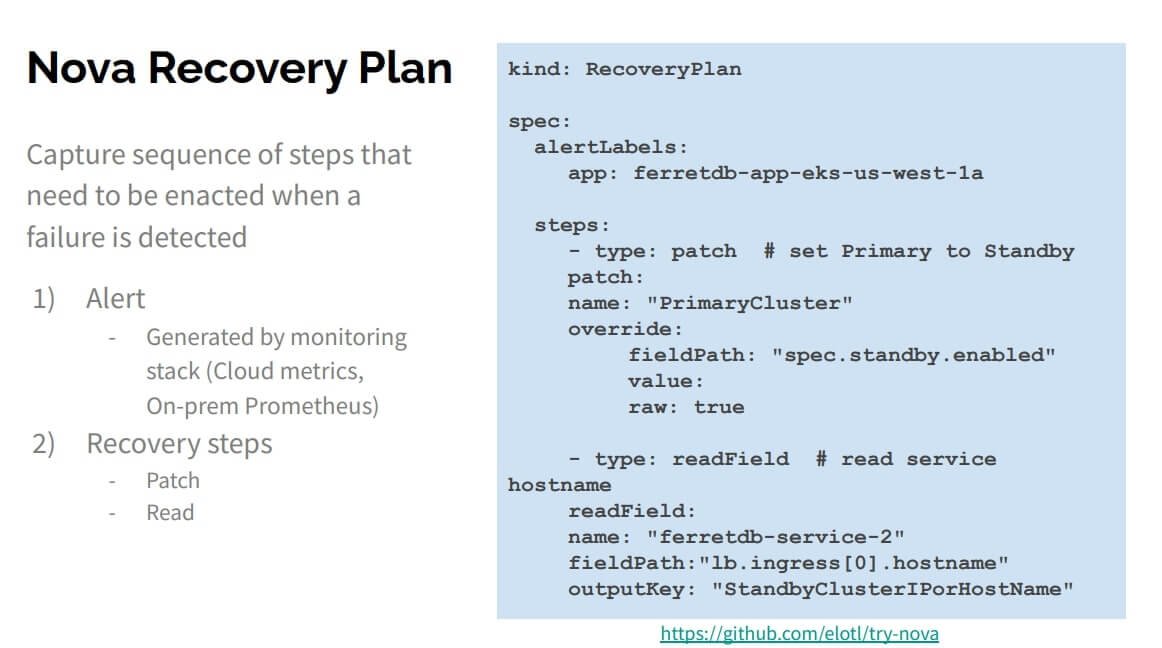

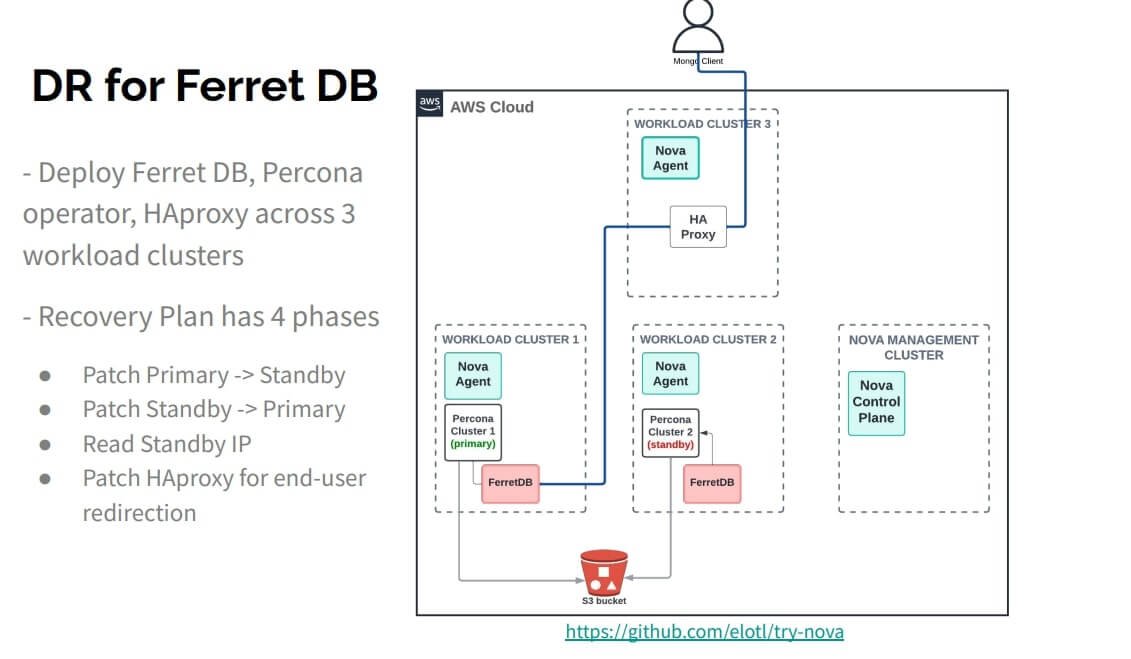

The Nova Recovery Plan Explained

The Nova recovery plan consists of two key elements:

Alert Definition: The alert, identified by a unique name, that triggers the database recovery steps. It can be generated from Prometheus metrics or by your cloud provider's monitoring system. This post focuses on Prometheus because of its widespread adoption.

Recovery Plan Steps: These are a sequence of actions categorized as “patch” and “read” steps:

- Patch Steps: Modify existing cluster manifests. For instance, a patch step could switch the primary and standby document databases.

- Read Steps: Gather information from the clusters. An example might be reading the standby database’s service endpoint to reconfigure a load balancer.
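The Python model below makes the alert-plus-steps structure concrete. A plan is keyed by an alert name, and a firing alert selects the plans to run. The input follows the shape of the JSON body that Prometheus Alertmanager sends to a webhook receiver (a `status` field and an `alerts` list whose entries carry `labels.alertname`). Whether and how Nova consumes that payload is an assumption here. The `RecoveryPlan` and `Step` types, alert name, plan name, and resource names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Literal


@dataclass
class Step:
    kind: Literal["patch", "read"]  # patch modifies a resource; read gathers information
    cluster: str
    target: str                     # hypothetical resource reference
    description: str


@dataclass
class RecoveryPlan:
    name: str
    alert: str                      # name of the alert that triggers this plan
    steps: list[Step] = field(default_factory=list)


def plans_to_trigger(payload: dict, plans: list[RecoveryPlan]) -> list[RecoveryPlan]:
    """Return plans whose alert is firing in an Alertmanager-style webhook payload."""
    firing = {
        alert["labels"].get("alertname")
        for alert in payload.get("alerts", [])
        if alert.get("status") == "firing"
    }
    return [plan for plan in plans if plan.alert in firing]


plan = RecoveryPlan(
    name="db-failover",
    alert="PrimaryDatabaseDown",
    steps=[
        Step("patch", "workload-1", "postgrescluster/db", "swap primary and standby roles"),
        Step("read", "workload-2", "service/db", "read standby endpoint for the load balancer"),
    ],
)
payload = {
    "status": "firing",
    "alerts": [{"status": "firing", "labels": {"alertname": "PrimaryDatabaseDown"}}],
}
print([p.name for p in plans_to_trigger(payload, [plan])])  # ['db-failover']
```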

Understanding the Demo Scenario:

The upcoming live demo (covered in part two of this blog series) will showcase a disaster recovery scenario with four clusters:

- Nova Management Cluster: Houses the Nova control plane.

- Workload Clusters 1 & 2: These clusters run your workloads and communicate with the Nova control plane through Nova agents.

- Database: The workload clusters run FerretDB as the document database, with a PostgreSQL backend managed by Percona's Postgres operator. The database setup uses an S3 bucket for storage.

- HAProxy Cluster: This cluster serves as the load balancer, with users directing their traffic to it.

The recovery plan has four phases:

- Patch the primary database to become standby.

- Patch the standby database to become primary.

- Read the IP address of the former standby (now primary) database.

- Reconfigure the HAProxy to point to the new primary database.
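The four phases can be simulated end to end against an in-memory model to see how they depend on each other. The load balancer can only be repointed after the promoted database's address has been read. Demoting the old primary before promoting the standby is one plausible way to avoid briefly running two primaries. Everything below is hypothetical: the `Fleet` model, cluster names, IP addresses, and the assumption that clients reach FerretDB on its default port 27017. The code does not call Nova, Kubernetes, or HAProxy, although the rendered lines follow HAProxy's `backend`/`server` configuration syntax.

```python
from dataclasses import dataclass, field


@dataclass
class DatabaseInstance:
    cluster: str
    role: str          # "primary" or "standby"
    service_ip: str


@dataclass
class Fleet:
    databases: dict[str, DatabaseInstance]
    haproxy_backend: list[str] = field(default_factory=list)


def render_backend(ip: str, port: int = 27017) -> list[str]:
    """Render a minimal HAProxy backend section with a single server."""
    return ["backend documentdb", "    mode tcp", f"    server primary {ip}:{port} check"]


def fail_over(fleet: Fleet, old: str, new: str) -> list[str]:
    """Run the four phases in order and return a log of what was done."""
    log = []
    # Phase 1 (patch): demote the old primary.
    fleet.databases[old].role = "standby"
    log.append(f"patch {old}: primary -> standby")
    # Phase 2 (patch): promote the standby.
    fleet.databases[new].role = "primary"
    log.append(f"patch {new}: standby -> primary")
    # Phase 3 (read): look up the promoted database's address.
    ip = fleet.databases[new].service_ip
    log.append(f"read {new}: service IP {ip}")
    # Phase 4 (patch): repoint the load balancer at the new primary.
    fleet.haproxy_backend = render_backend(ip)
    log.append(f"patch haproxy: backend -> {ip}")
    return log


fleet = Fleet(
    databases={
        "workload-1": DatabaseInstance("workload-1", "primary", "10.0.1.10"),
        "workload-2": DatabaseInstance("workload-2", "standby", "10.0.2.10"),
    },
    haproxy_backend=render_backend("10.0.1.10"),
)
log = fail_over(fleet, old="workload-1", new="workload-2")
print("\n".join(log))
print("\n".join(fleet.haproxy_backend))
```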

Conclusion

In this blog post, we’ve explored the compelling case for automating disaster recovery for document databases within Kubernetes environments. Selvi Kadirvel, Tech Lead Engineering Manager at Elotl, introduced us to Nova, a powerful multi-cluster orchestrator that simplifies this process. We learned about Nova’s unique capabilities, including its comprehensive cluster visibility and control, and its seamless integration with Kubernetes through custom resources like the Nova recovery plan.

For a practical demonstration of Nova’s disaster recovery features, head over to part two of this blog series! Maciek Urbanski, a Platform Engineer at Elotl, will guide you through a live demo showcasing how Nova automates the recovery process for a document database cluster.

We extend our heartfelt gratitude to the speakers Selvi Kadirvel and Maciek Urbanski for their insightful contributions to this discussion. Watch the full webinar here. Join the Document Database Community on Slack, and share your comments below.
