Speaker: Filipe Oliveira, a Principal Performance Engineer at Redis.

In the ever-evolving world of technology, the quest for speed and efficiency leads us to fascinating discussions and discoveries. At a recent meetup of the Document Database Community, Filipe Oliveira, a seasoned Principal Performance Engineer from Redis, took the stage to delve into a topic that resonates with every tech enthusiast: the Global NoSQL Benchmark Framework. Amidst an era where latency is increasingly becoming the new outage, Filipe’s insights on embracing real-world performance through realistically benchmarking world-scale scenarios are more pertinent than ever. With a focus not on boasting but on providing evidence of the importance of various performance factors, Filipe aims to shed light on how Redis sets itself apart in terms of speed and why it matters. As we navigate through market expectations and the intricacies of a globally distributed benchmark, join us in uncovering the realities of performance analysis and what it signifies for the future of technology.

Latency: The New Outage

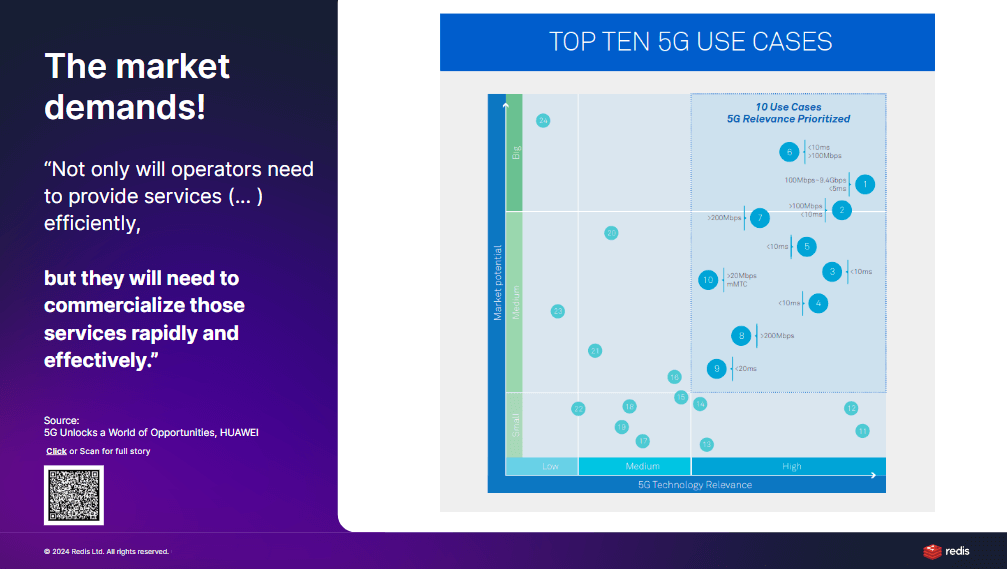

In the digital age, where milliseconds can determine the success or failure of technology, latency has emerged as a pivotal concern, often regarded as the “new outage.” Filipe begins by addressing the significance of latency, questioning its impact both in the short term and over the long horizon. He presents data from a Huawei study that introduces a magic quadrant linking market potential to technological relevance, with an emphasis on latency. This quadrant isn’t just theoretical; it’s a practical tool for understanding how latency targets directly influence the viability and competitiveness of various applications, from autonomous vehicles and healthcare to virtual reality and smart cities. The message is clear: to lead in a specific domain, achieving and maintaining optimal latency thresholds is not just beneficial; it’s imperative.

Bridging Technology and Market Expectations

The discourse further navigates through the intricate relationship between technological advancements and market expectations, specifically through the lens of latency. Huawei’s magic quadrant serves as a cornerstone for this analysis, demonstrating that high-value use cases demand stringent latency requirements. For instance, in sectors with both high technological relevance and market potential, solutions must offer latency below 100 milliseconds—often striving for single-digit figures—to remain competitive and effective. This principle is not abstract but a quantifiable target that varies significantly across different applications, highlighting the tailored approach needed to meet these benchmarks.

Healthcare: A Case Study in Latency Impact

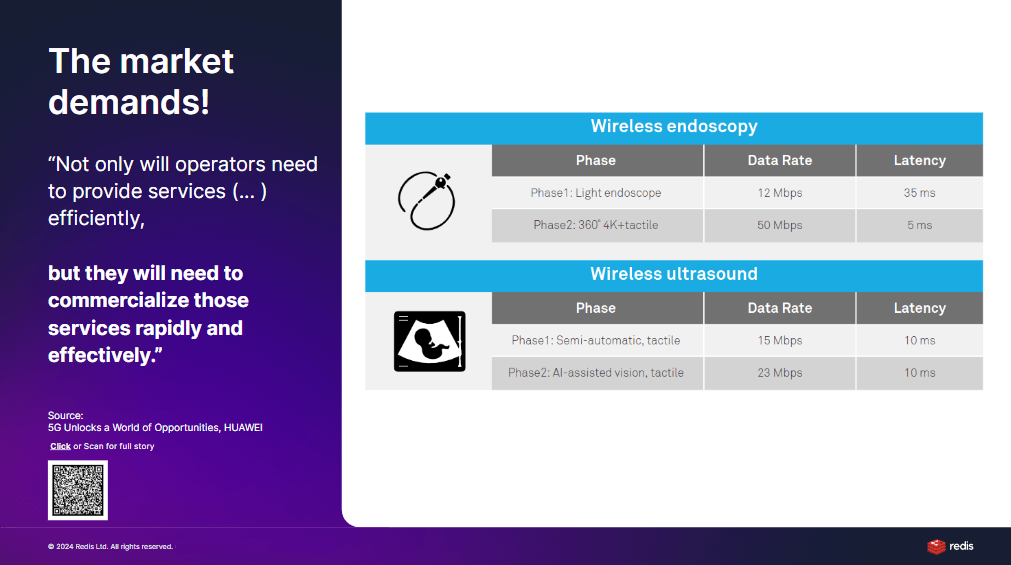

Focusing on healthcare, Filipe elucidates how latency is not just a technical metric but a critical factor that can enhance or hinder life-saving technologies. Through Huawei’s analysis, two phases of technological deployment in healthcare are examined: short-term and mid-term. In the short term, technologies like wireless endoscopy must achieve latency well below 35 milliseconds to be considered viable. However, the goalposts move even closer in the mid-term, with expectations plummeting to below 5 milliseconds for such applications to truly deliver on their promise. This steep decline in acceptable latency illustrates the rapid pace at which technology and healthcare needs are evolving, underscoring the necessity for continuous innovation and performance optimization in the digital health space.

Each of these sections demonstrates Filipe’s commitment to diving deep into the nuances of latency and its ramifications across different sectors. By leveraging real-world examples and data, he provides a comprehensive overview of why and how technology developers and companies must prioritize latency to meet the growing demands of the modern market and ensure the practical utility of their innovations.

Embracing Ultra-Low Latency in Modern Medicine

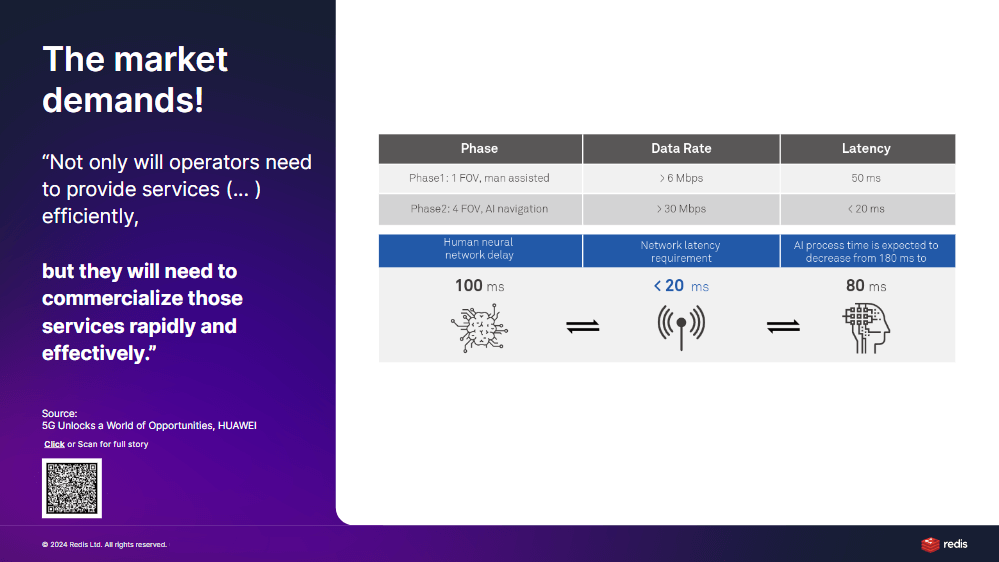

As we navigate through the complexities of modern technological demands, the conversation shifts towards not just achieving but sustaining ultra-low latency alongside increased network bandwidth. This balance is particularly vital in fields like modern medicine, where the volume of data transferred is colossal, yet the expectation for instantaneous response remains non-negotiable. Filipe extends this dialogue to the realm of artificial intelligence (AI), where the expectations around response times are stringent. Initially, end-to-end responses, encompassing both processing and network latencies, are expected to fall below 50 milliseconds, and in any case not exceed 100 milliseconds, to feel real-time from a human perspective. As technology advances, the target tightens further to 20 milliseconds, rendering response times of even a few hundred milliseconds obsolete and potentially relegating applications that cannot keep pace to irrelevance.

Competitive Advantage Through Speed and Reliability

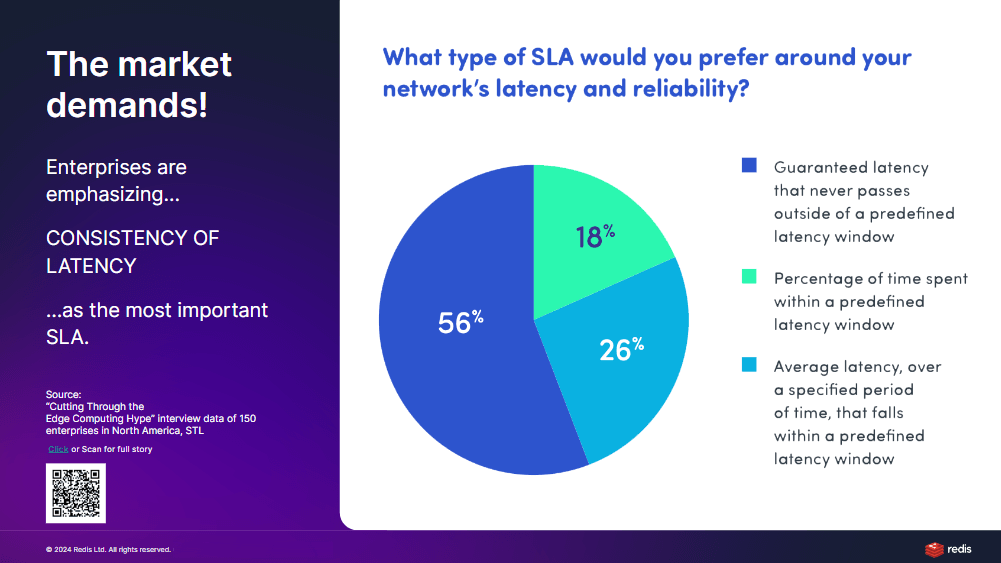

This relentless pursuit of speed is underpinned by competitive advantage—the quicker the response time relative to competitors, the greater the market relevance. This assertion is supported by market studies conducted by Filipe and his team, which highlight not just the desire for speed but for consistency and reliability in performance. The focus on long-tail latencies, such as the 99th percentile (P99) or the 95th percentile (P95), is particularly telling. These metrics reveal the stability of a system under load, where average latencies might mask significant delays experienced by a subset of users.
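
To make the point about averages concrete, here is a minimal sketch that compares the mean with tail percentiles. The sample latencies are hypothetical and chosen only for illustration, and the `percentile` helper uses the simple nearest-rank definition, which may differ from what any given benchmark tool reports.

```python
import math
import statistics


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank into the sorted samples
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds: 95 fast requests and 5 slow ones.
latencies_ms = [2.0] * 95 + [250.0] * 5

mean_ms = statistics.mean(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.1f} p50={p50_ms} p95={p95_ms} p99={p99_ms}")
```

With these made-up numbers the mean is 14.4 ms, which describes neither the typical 2 ms request nor the 250 ms experienced by the slowest 5% of users. Only the P99 exposes that tail.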

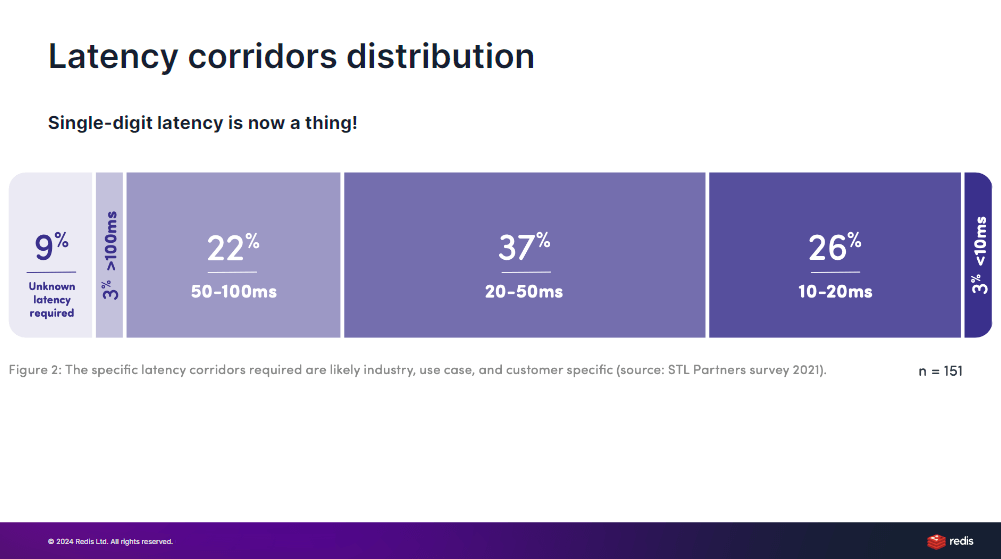

The Quest for Latency Reliability and Stability

The discourse on latency thus evolves from mere numerical targets to the reliability and stability of latency SLAs (Service Level Agreements). Industry inquiries involving over a hundred large U.S. companies have underscored a preference for not just low latency, but predictable and reliable low latency. These companies demand assurance of consistent 50-millisecond response times, without the volatility that could compromise service quality or user experience. This nuanced understanding of latency, which goes beyond simple averages to encompass reliability and the spread of the latency distribution, challenges developers and engineers to prioritize system stability and reliability as much as speed.

In essence, Filipe’s insights illuminate a complex landscape where achieving low latency is only the beginning. The ultimate goal is to ensure that such performance is reliable, stable, and uniformly distributed, underscoring a comprehensive approach to latency that meets the exacting standards of today’s technology and market demands.

The Imperative of Ultra-Low Latency for Market Relevance

For businesses vying for dominance in various industries, the mandate is clear: achieve ultra-low latency or risk obsolescence. A detailed study of market and industry demands shows that leaders and challengers in the field now set the bar at response times below 10 milliseconds. This stringent requirement underscores the shift towards real-time, or even ultra-low latency, as a benchmark for technological relevance. The lower the latency a company can deliver, the more sectors it can compete in. Conversely, failure to meet these thresholds significantly narrows a business’s scope for success. The essence of these findings is straightforward: speed and reliability are non-negotiable for companies aiming to excel in the fast-paced arena of modern technology applications.

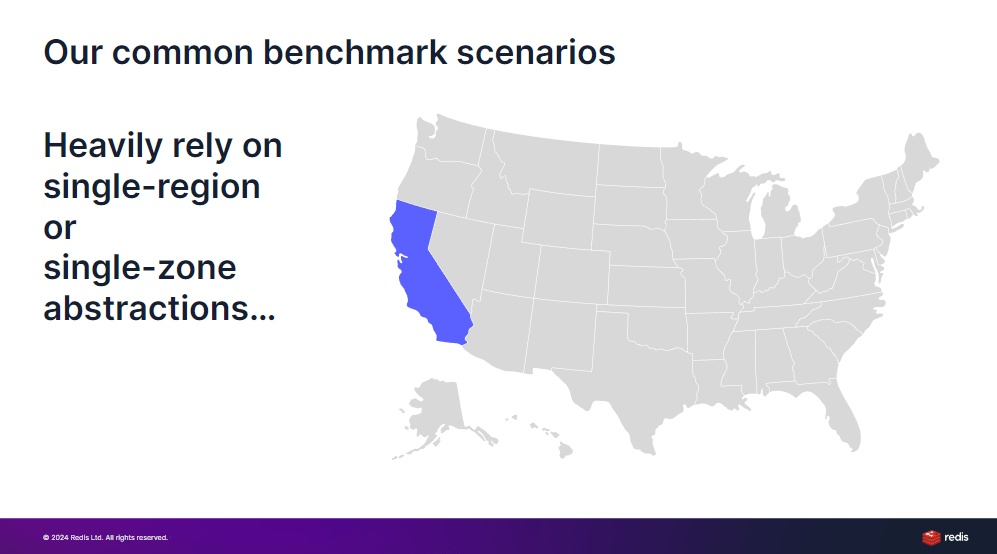

Rethinking Benchmarking for Global Distribution

The traditional approach to benchmarking, with its focus on single regions or zones, falls short in today’s globally interconnected landscape. Filipe challenges this narrow perspective, advocating for a more holistic approach that reflects the global distribution of users and the varied hardware involved in their interactions with digital solutions. By transcending the limitations of single-region benchmarks, the proposed framework aims to address real-world complexities and worst-case scenarios that businesses may encounter. This shift towards multi-region benchmarking is not just about achieving broader coverage; it’s about aligning benchmark practices with the realities of global service delivery and the diverse latency expectations of users worldwide.

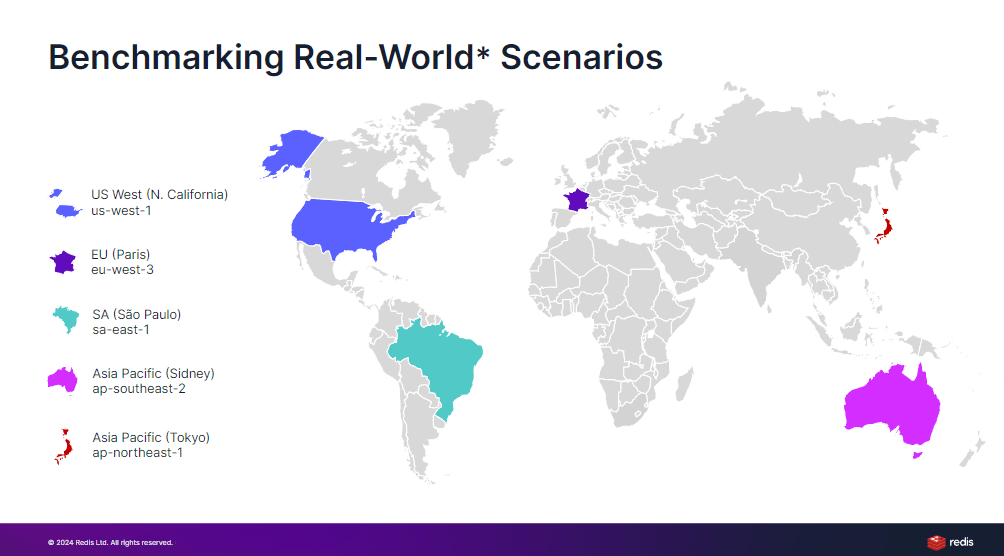

Introducing a Real-World Benchmark Framework

Filipe’s presentation pivots towards a groundbreaking benchmark framework designed to emulate more realistic scenarios by distributing benchmark agents across multiple global regions. While acknowledging the limitations and abstractions inherent in any benchmarking exercise, he emphasizes the importance of this multi-regional approach in capturing a wider array of real-life conditions. By focusing on five strategically chosen regions, the framework seeks to encompass potential worst-case scenarios in communication latency, thus providing a more accurate reflection of global network performance. Filipe’s initiative not only challenges traditional benchmarking methodologies but also opens the door to deeper discussions on the necessity of multi-region benchmarks for achieving a truly representative understanding of global system performance.

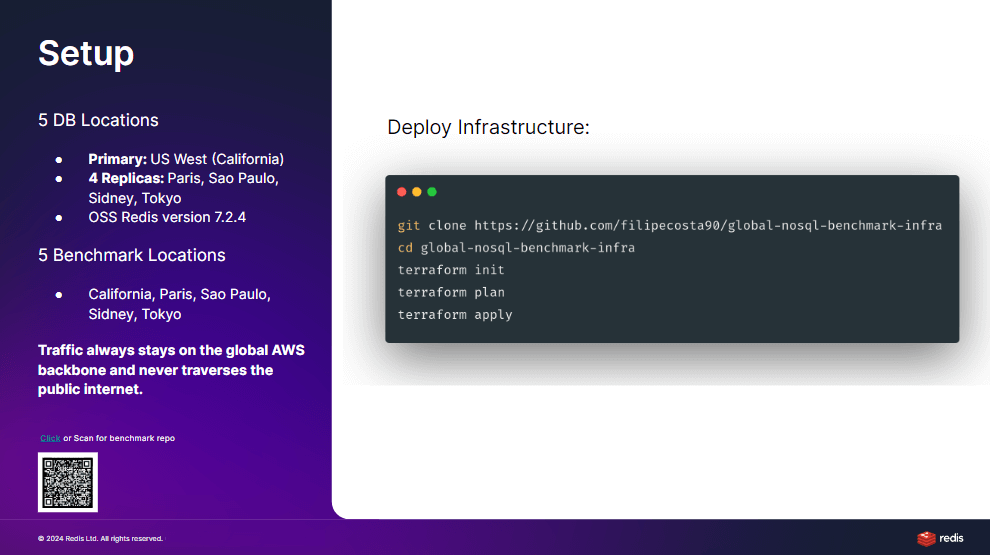

Architecting a Global Benchmarking Strategy

Filipe’s strategic blueprint for benchmarking involves a sophisticated setup encompassing five distinct zones, with the United States serving as the primary zone housing the main database node. This configuration is designed to mirror a multi-regional deployment typical of global applications, where latency and data consistency across geographical distances are critical concerns. Each of the four additional regions is equipped with both client and database nodes, creating a network that simulates real-world interactions between distributed systems. This arrangement facilitates a comprehensive evaluation of performance across diverse scenarios, ensuring that the benchmark reflects the complexities of global data management and access.
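
The layout described above can be written down as a small data structure. This is only an illustrative sketch: the `Region` type and helper names are hypothetical and not taken from the benchmark's repositories, and it assumes the primary region also hosts a client, since local access from the primary region is measured later in the talk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    has_client: bool
    has_database: bool
    is_primary: bool = False


# Five regions: one primary in the US and four replica regions,
# each with both a client (benchmark agent) and a database node.
TOPOLOGY = (
    Region("Northern California", True, True, is_primary=True),
    Region("Paris", True, True),
    Region("São Paulo", True, True),
    Region("Sydney", True, True),
    Region("Tokyo", True, True),
)


def primary(topology):
    """Return the single primary region, or fail if the layout is inconsistent."""
    primaries = [r for r in topology if r.is_primary]
    if len(primaries) != 1:
        raise ValueError("expected exactly one primary region")
    return primaries[0]


def replicas(topology):
    """Return every region that hosts a non-primary database node."""
    return [r for r in topology if r.has_database and not r.is_primary]


print(primary(TOPOLOGY).name, [r.name for r in replicas(TOPOLOGY)])
```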

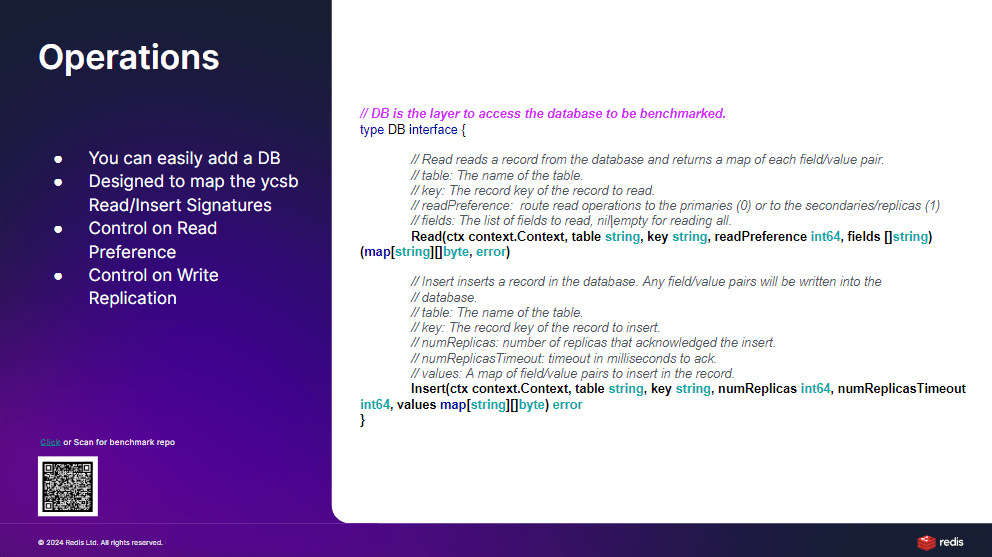

Simplifying Multi-Region Benchmarking

The ambition behind this endeavor is to distill the complexity of multi-region benchmarking into a manageable and understandable framework. By drawing parallels with the established Yahoo! Cloud Serving Benchmark (YCSB) version 1, Filipe seeks to leverage familiar benchmarking paradigms while introducing modifications necessary for a multi-regional context. The adaptation involves an interface closely mirroring YCSB’s, with enhancements tailored to accommodate the nuances of global distribution, such as read preferences and write acknowledgments across multiple regions. This approach aims to provide a balance between the benchmark’s comprehensiveness and its accessibility to those familiar with existing benchmarking standards.

Enhancing Benchmark Flexibility and Realism

Key to this benchmarking framework is its flexibility in simulating various operational scenarios, including reading from primary or replica nodes based on proximity and specifying write acknowledgments across several replicas. This granularity not only increases the realism of the benchmark but also allows for a nuanced assessment of how global distribution affects database performance. By enabling configurations that reflect actual deployment strategies—such as read and write operations that consider geographic location and network latency—Filipe’s framework promises to deliver insights that are both actionable and reflective of real-world application demands.

Benchmark Scenarios: From Global Access to Local Reads

Filipe delineates three distinct cases to illustrate the benchmark’s capabilities in assessing the performance implications of data access strategies across global deployments:

- Global Access to the Primary Node:

In the first scenario, all operations, regardless of the originating region, interact directly with the primary database node located in the US. This setup simulates a centralized data access model, where every read and write operation incurs the latency of cross-regional communication, disregarding the potential benefits of local replicas for read operations.

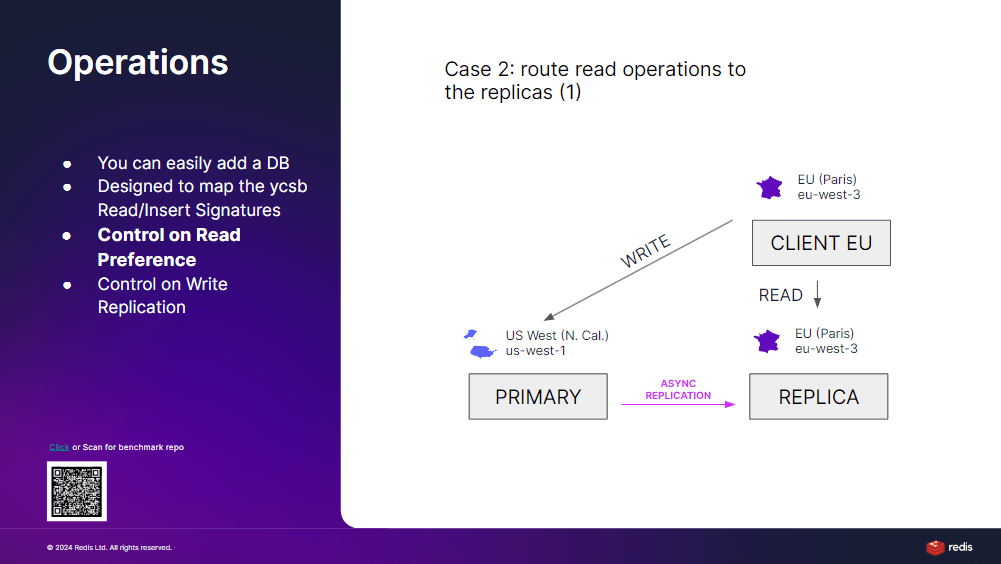

- Local Reads with Potential Stale Data:

The second scenario introduces the concept of local reads from replicas while maintaining global writes to the primary node. This model acknowledges the possibility of reading stale data due to the asynchronous replication from the primary to the replicas. It highlights the trade-off between reducing read latency through local access and the risk of accessing outdated information.

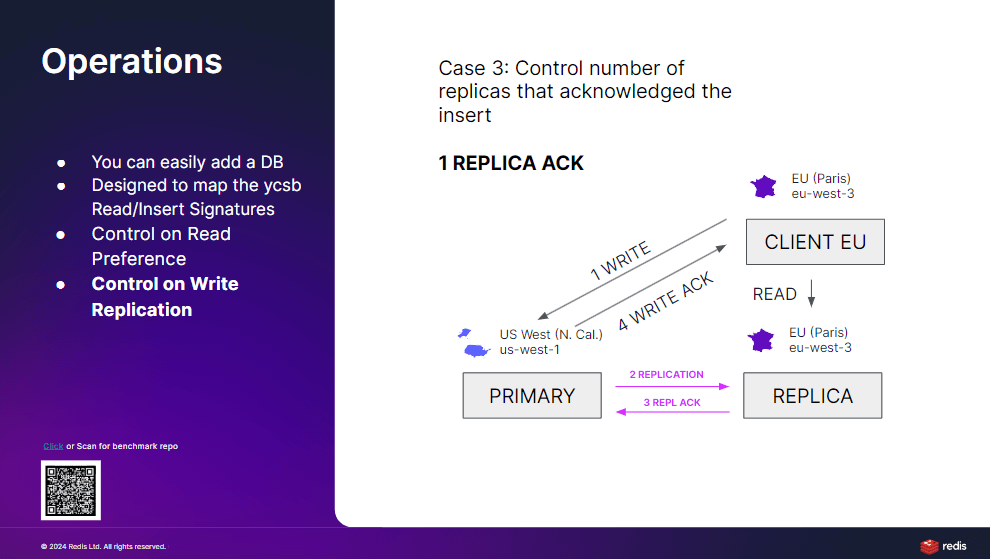

- Varied Acknowledgement Strategies for Writes:

The third case expands on the second by varying the acknowledgment requirements for write operations. This scenario allows for a detailed examination of how the number of acknowledgments affects write latency and the freshness of the data read from replicas. By adjusting the acknowledgment criteria, the benchmark can simulate different levels of data consistency, from eventual consistency (with no acknowledgments) to stronger consistency models (requiring multiple acknowledgments).
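
The three cases differ only in where reads are served and how many replica acknowledgments a write waits for. A minimal sketch of that idea follows; the `Scenario` type and function names are hypothetical, and the actual tool's options may be named and structured differently.

```python
from dataclasses import dataclass

PRIMARY_REGION = "Northern California"


@dataclass(frozen=True)
class Scenario:
    name: str
    local_reads: bool  # True: read from the replica in the client's own region
    write_acks: int    # replica acknowledgments required before a write completes


SCENARIOS = (
    Scenario("case 1: global access to the primary", local_reads=False, write_acks=0),
    Scenario("case 2: local reads, possibly stale", local_reads=True, write_acks=0),
    Scenario("case 3: local reads, acknowledged writes", local_reads=True, write_acks=1),
)


def read_target(scenario, client_region):
    """Region whose database node serves a read issued from client_region."""
    return client_region if scenario.local_reads else PRIMARY_REGION


def write_target(scenario, client_region):
    """Writes always go to the primary in all three cases."""
    return PRIMARY_REGION


for s in SCENARIOS:
    print(s.name, "| Tokyo reads from:", read_target(s, "Tokyo"),
          "| writes to:", write_target(s, "Tokyo"), "| acks:", s.write_acks)
```

Case three is shown here with one acknowledgment; varying `write_acks` is what moves the scenario along the consistency spectrum.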

The Trade-off Between Consistency and Performance

Through these scenarios, Filipe presents a comprehensive framework for evaluating the delicate balance between ensuring data consistency and optimizing performance in globally distributed applications. The benchmark’s ability to adjust the number of acknowledgments for write operations shines a light on the inherent trade-offs that developers and architects must navigate when designing distributed systems. This approach not only measures the latency implications of various data replication strategies but also provides insights into achieving an optimal balance between ensuring up-to-date data across regions and maintaining responsive application performance.

Streamlining Global Benchmark Deployment

Filipe’s benchmark framework transcends mere theoretical discourse, extending into practical application facilitated by comprehensive tooling and infrastructure readily accessible to the tech community. The creation of two GitHub repositories—one for the benchmark tool and another for the necessary infrastructure—demystifies the process of setting up a global benchmark. By leveraging Terraform and proper credentials, users can replicate Filipe’s multi-regional setup with ease, deploying virtual machines (VMs) across five different regions to host database and client nodes.

Emphasizing the Impact of Network Latency

The core objective behind utilizing open-source databases for this benchmark is not to evaluate the databases’ performance but to underscore the significant impact network latency can have, even when utilizing high-speed solutions. This approach highlights the fundamental issue with benchmarks that are confined to single-region assessments and neglect the complexities of a globally distributed architecture. Filipe’s methodology ensures that all network traffic traverses the backbone, offering a stable and reliable environment that still reveals the intrinsic challenges posed by global deployments.

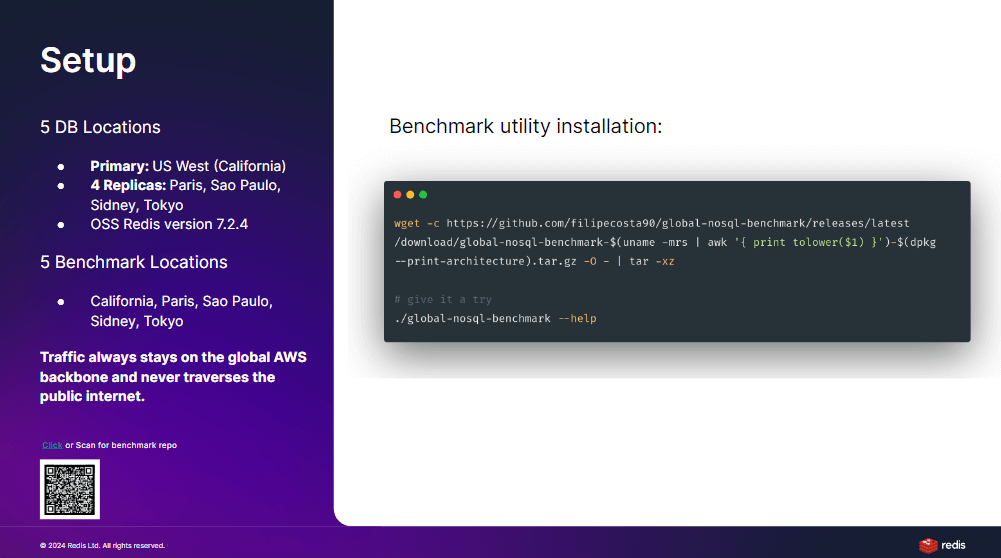

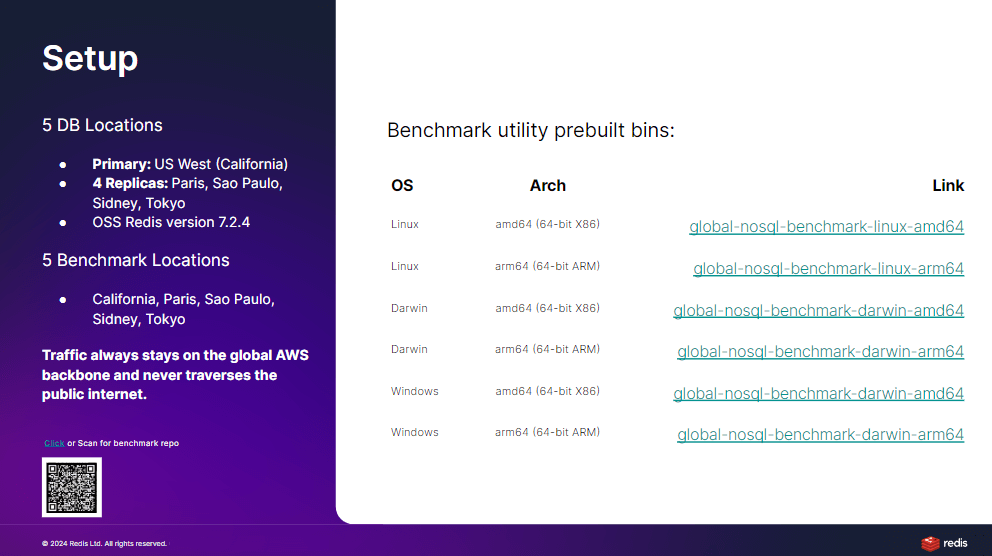

Simplified Benchmark Utility Access and Use

Accessibility and ease of use are critical components of Filipe’s benchmark utility, which is designed to lower the barrier to entry for conducting sophisticated multi-region benchmarks. The utility, hosted on GitHub, requires no Go programming language knowledge for execution, with pre-built binaries available for a wide range of platforms, including Linux, macOS, and Windows, across both x86 and ARM architectures. This accessibility ensures that the benchmark can be easily adopted and executed by a diverse audience, from researchers to practitioners, looking to understand and mitigate the effects of network latency on global systems.

Orchestrating the Benchmark with Distributed Agents

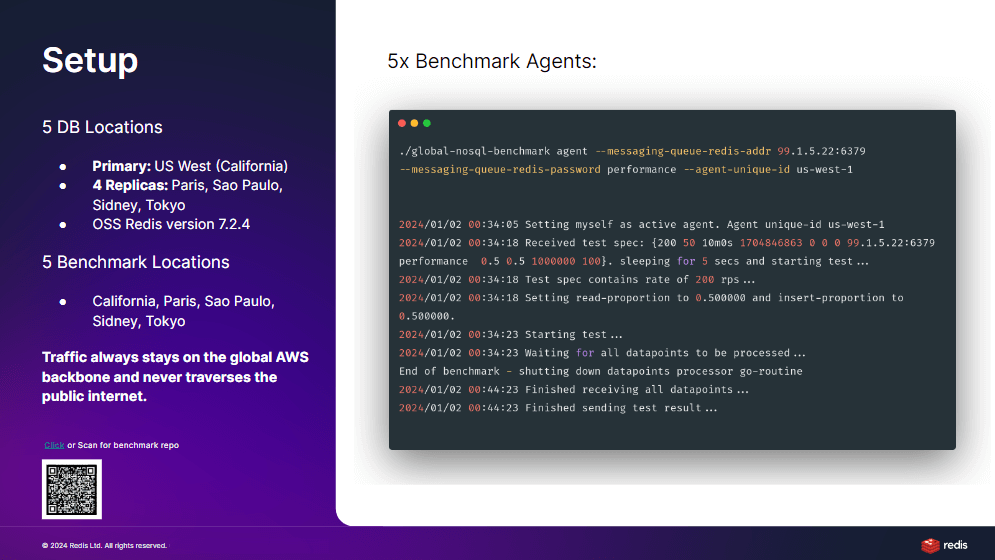

In the architecture of Filipe’s benchmark framework, the deployment strategy employs a network of benchmark agents distributed across five different regions globally. Each of these agents, equipped with a unique identifier corresponding to its geographic location, stands ready to perform assigned tasks. This setup allows for a granular examination of global system performance from various points around the world, simulating real user interactions across diverse network conditions. The simplicity of these agents—they merely await instructions—ensures that the complexity of benchmark execution is centralized, facilitating a streamlined and efficient assessment process.

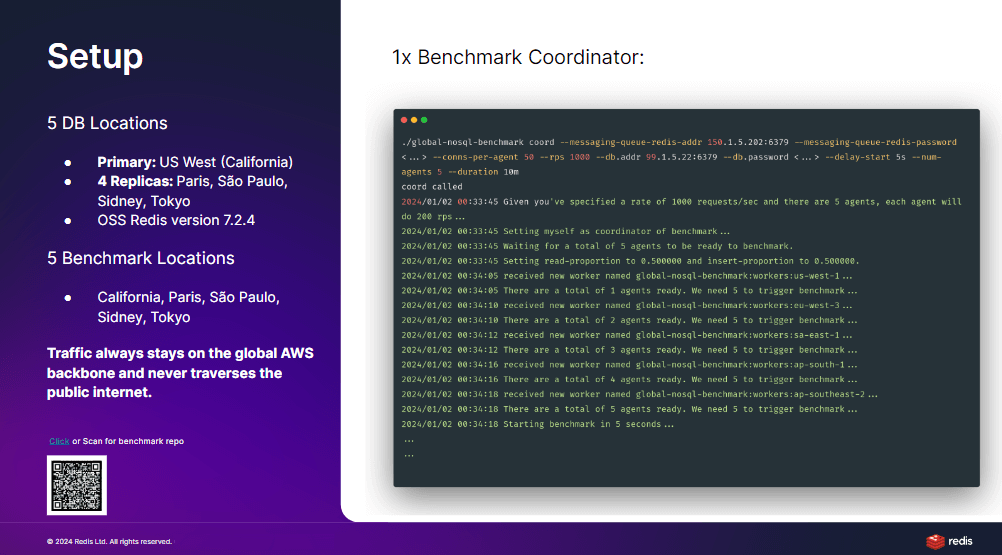

Centralizing Control with a Benchmark Coordinator

The linchpin of this distributed benchmarking system is the benchmark coordinator, a singular entity designed to orchestrate the entire operation. This coordinator is responsible for setting key parameters such as request rate, data payload size, and connection counts per agent, effectively dictating the behavior of the entire benchmark exercise. By centralizing the logic and control, the coordinator ensures that each agent’s efforts are synchronized toward the collective goal of the benchmark, allowing for a coordinated, system-wide performance analysis. The use of a message queue for communication between the coordinator and agents exemplifies a practical approach to managing distributed systems, ensuring timely and organized execution of the benchmarking process.

Streamlining Benchmark Execution

The execution of the benchmark is a testament to the framework’s design efficiency and user-friendly approach. Once the coordinator and agents are configured and launched, the benchmarking process unfolds with remarkable simplicity. Agents signal their readiness to the coordinator, who, upon verifying the preparedness of all nodes, initiates the benchmark based on predefined parameters. This process illustrates not only the technical feasibility of conducting complex, multi-regional benchmarks but also showcases the framework’s capacity to adapt to various testing scenarios, such as comparing local versus remote reads or adjusting the number of write acknowledgments. The ease with which these benchmarks can be deployed and executed underscores the framework’s potential to provide meaningful insights into the performance characteristics of globally distributed systems.
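
The ready-then-start handshake can be sketched with in-process queues standing in for the message queue. This is an assumption-laden illustration, not the tool's implementation: the function names, the parameter keys, and the use of threads in place of remote agents are all hypothetical.

```python
import queue
import threading


def agent(region, to_coordinator, inbox, results):
    """A benchmark agent: announce readiness, then wait for instructions."""
    to_coordinator.put(("ready", region))
    params = inbox.get()  # blocks until the coordinator sends the run parameters
    # A real agent would now drive load against the database;
    # this sketch only records what it was told to do.
    results.put((region, params))


def run_coordinator(regions, params):
    """Start one agent per region, wait until all are ready, then broadcast params."""
    to_coordinator = queue.Queue()
    results = queue.Queue()
    inboxes = {region: queue.Queue() for region in regions}
    threads = [
        threading.Thread(target=agent, args=(region, to_coordinator, inboxes[region], results))
        for region in regions
    ]
    for t in threads:
        t.start()

    ready = set()
    while ready != set(regions):  # do not start until every agent has reported in
        kind, region = to_coordinator.get()
        if kind == "ready":
            ready.add(region)

    for region in regions:
        inboxes[region].put(params)
    for t in threads:
        t.join()
    return dict(results.get() for _ in regions)


regions = ["Northern California", "Paris", "São Paulo", "Sydney", "Tokyo"]
# Hypothetical parameter names for request rate, payload size, and connections per agent.
params = {"requests_per_second": 100, "payload_bytes": 1024, "connections": 10}
assignments = run_coordinator(regions, params)
print(sorted(assignments))
```

Keeping the agents this passive means every decision about the run lives in one place, which matches the centralized design described above.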

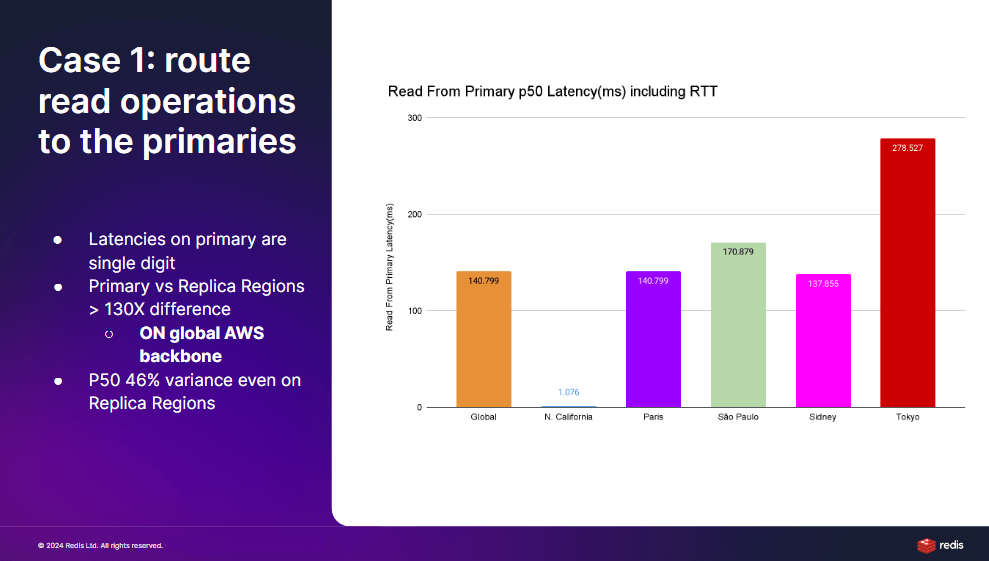

Unveiling the Impact of Global Latency

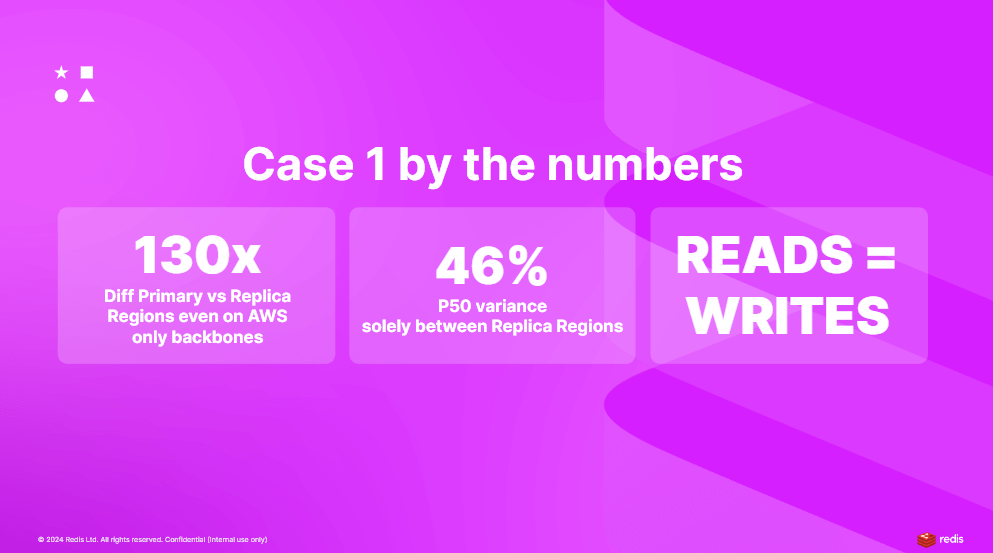

Filipe’s analysis sheds light on the profound impact that global latency has on database operations, especially when benchmarking in a multi-regional context. Case number one, focusing solely on interactions with the primary region without local reads, serves as a baseline for understanding the performance nuances in a global setup. By separating latency measurements by region and contrasting local access (Northern California) with remote access, Filipe underscores the significant latency disparities inherent in global networks.

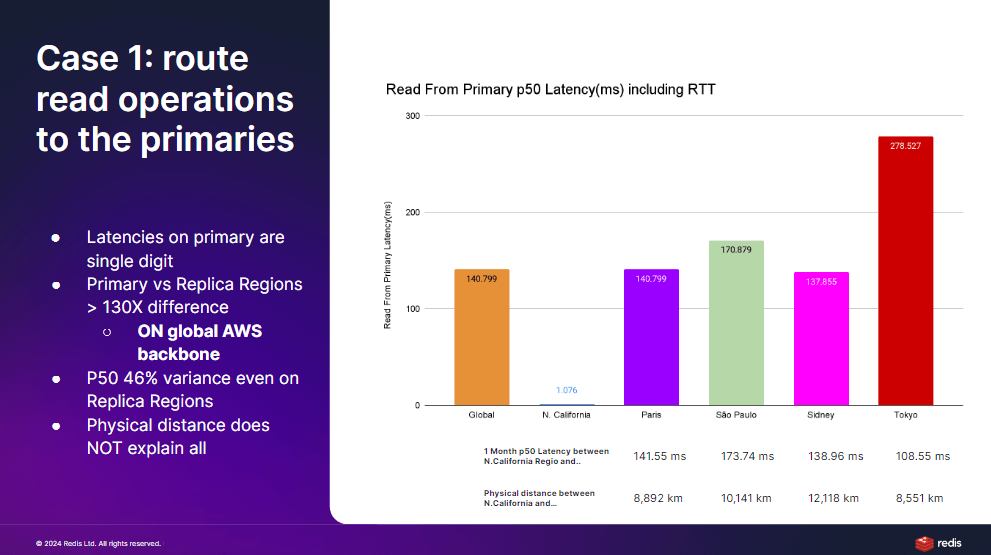

The Latency Variability in Global Networks

The findings reveal an astonishing 135 to 130 times increase in latency variability when transitioning from single-zone to multi-region benchmarks. This discrepancy highlights the limitations of relying solely on single-zone benchmarks for evaluating the performance of globally distributed systems. Interestingly, the physical distance between regions does not linearly correlate with latency, as demonstrated by comparing the latencies of Tokyo and Paris despite their similar distances from Northern California. This observation suggests that other factors, such as the infrastructure or backbone quality, play critical roles in influencing global network performance.

Factors Influencing Global Performance

The analysis further explores the variability in latency across different replica regions, revealing significant inconsistencies even among regions equidistant from the primary. For example, the latency variance among Paris, São Paulo, and Sydney compared to Tokyo highlights the complex dynamics affecting global network performance. These discrepancies underscore the challenges in predicting system behavior based solely on geographic proximity, pointing to the need for a deeper investigation into the underlying network and infrastructure factors.

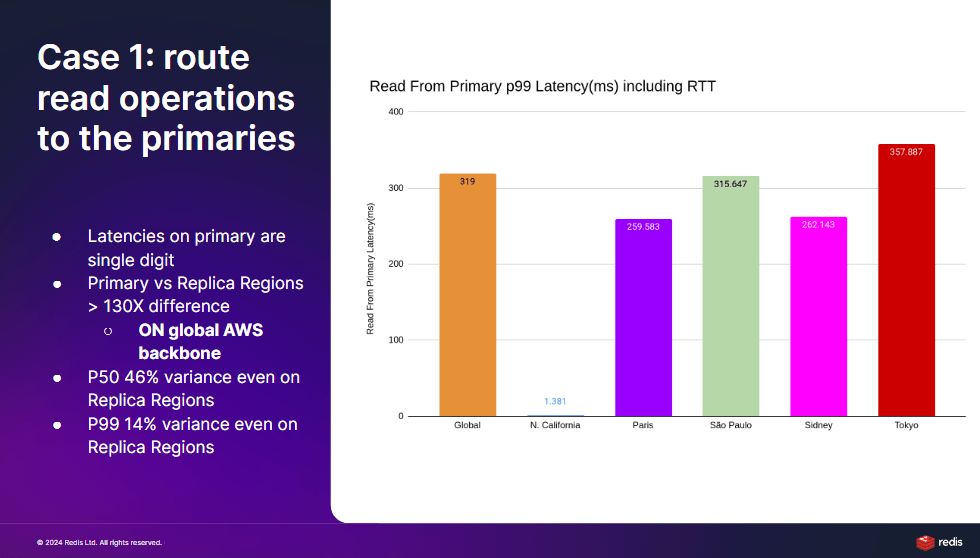

Diving into 99th Percentile Latencies

A closer examination of the 99th percentile latencies unveils even more pronounced differences between the primary and remote regions, albeit with a trend towards stabilization among the replica regions. This observation indicates that while median latencies offer insights into typical system behavior, extreme cases (represented by 99th percentile latencies) reveal the system’s resilience and performance under stress. The convergence of latencies at the 99th percentile across different regions suggests a uniformity in performance degradation, highlighting the global system’s behavior under maximum load conditions.

Implications for Global System Design

Filipe’s comprehensive analysis not only illuminates the significant impact of global latency on distributed systems but also emphasizes the importance of incorporating multi-regional benchmarks into system evaluation practices. By moving beyond simple single-zone benchmarks, developers and architects can gain a more accurate understanding of their systems’ global performance characteristics. This insight is crucial for designing systems that can deliver consistent, reliable performance across a diverse range of geographic locations, ultimately enhancing user experiences worldwide.

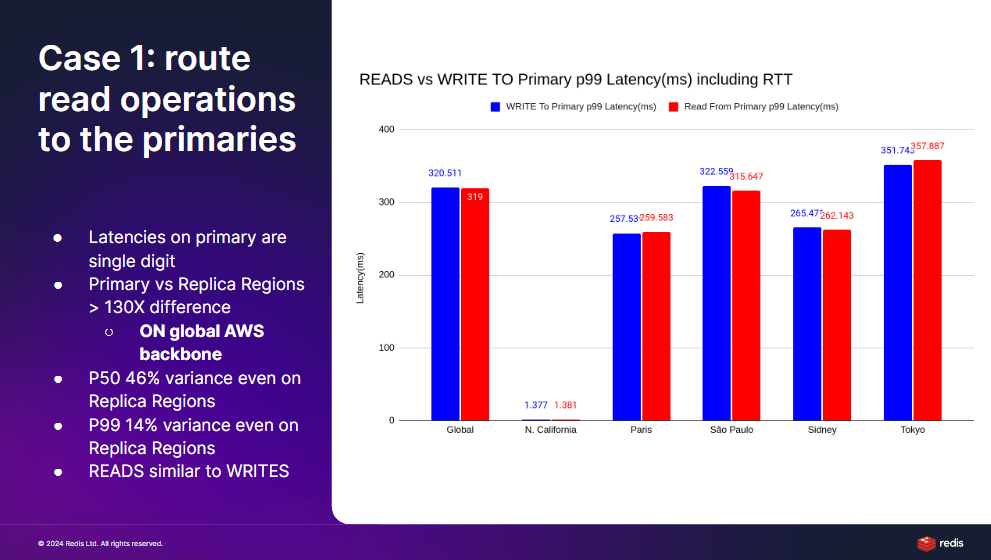

Equivalence of Read and Write Latencies in Global Operations

In the analysis of case one, Filipe brings to light an intriguing observation: the latency for read and write operations across the global network does not significantly differ. This uniformity in latency, regardless of the operation type, underscores a fundamental aspect of distributed systems—when operations are remote, the geographical distance and network quality predominantly dictate performance. Consequently, both read and write operations in remote regions, when compared to the primary region, exhibit similar latency profiles, illustrating the cost of global distribution on system responsiveness.

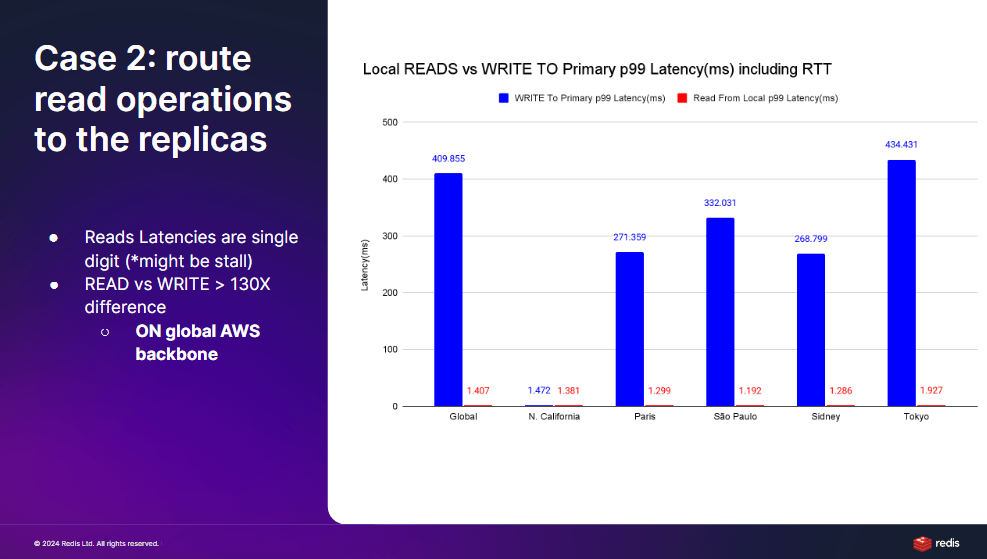

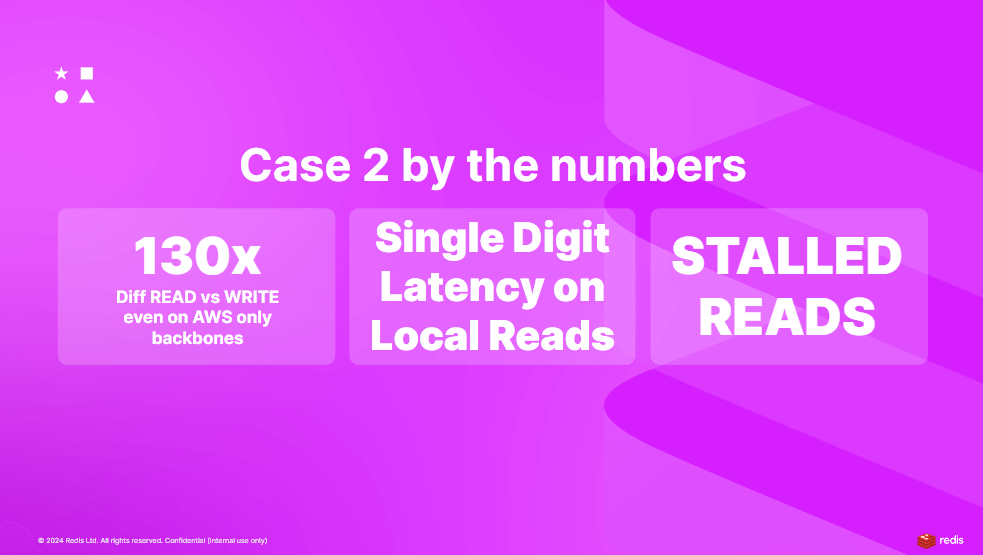

Local Reads Enhance Latency but Introduce Staleness

Transitioning to case two offers a contrasting perspective by localizing read operations while maintaining remote writes. This shift markedly improves read latencies across all regions, aligning them closely with the primary region’s performance metrics. Such an adjustment demonstrates the potential for local reads to drastically reduce latency, offering near-primary performance even in geographically distant regions. However, this improvement introduces the trade-off of data staleness, a factor that, depending on the application’s tolerance for delay in data synchronization, might be acceptable. Filipe’s analysis reveals a strategic option for distributed system design: optimizing for latency through local reads while carefully managing the implications for data freshness and consistency.

The Trade-off Between Latency and Data Freshness

The detailed examination of case two underscores a critical decision point in the architecture of globally distributed systems: the balance between achieving low latency for enhanced user experience and maintaining data consistency across the network. While localizing reads effectively minimizes latency, making global operations more efficient, it necessitates a consideration of the business and technical ramifications of serving potentially outdated information. This scenario highlights the importance of understanding an application’s tolerance for stale data relative to its response-time needs, and of designing for it deliberately.
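
A toy model shows how a local read can return stale data under asynchronous replication. The class below is a hypothetical sketch with one primary, one replica, and a fixed replication delay; it assumes writes arrive in non-decreasing time order and is not meant to represent any particular database.

```python
class AsyncReplicatedStore:
    """One primary and one replica; the replica sees each write only after a fixed delay."""

    def __init__(self, replication_delay_ms):
        self.replication_delay_ms = replication_delay_ms
        self.primary = {}
        self.replica = {}
        self.pending = []  # (visible_at_ms, key, value), kept in write order

    def write(self, now_ms, key, value):
        """Apply the write on the primary at once and schedule it for the replica."""
        self.primary[key] = value
        self.pending.append((now_ms + self.replication_delay_ms, key, value))

    def _apply_replication(self, now_ms):
        # Deliver every queued write whose delay has elapsed.
        while self.pending and self.pending[0][0] <= now_ms:
            _, key, value = self.pending.pop(0)
            self.replica[key] = value

    def read_primary(self, key):
        return self.primary.get(key)

    def read_replica(self, now_ms, key):
        self._apply_replication(now_ms)
        return self.replica.get(key)


store = AsyncReplicatedStore(replication_delay_ms=80)  # hypothetical delay
store.write(0, "cart", "v1")
store.write(100, "cart", "v2")

stale = store.read_replica(120, "cart")  # v2 is not visible on the replica until t=180
fresh = store.read_primary("cart")
later = store.read_replica(200, "cart")
print(stale, fresh, later)
```

At t=120 the replica still returns `v1` while the primary already holds `v2`; by t=200 the replica has caught up. Whether that window is acceptable is the application-level question raised above.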

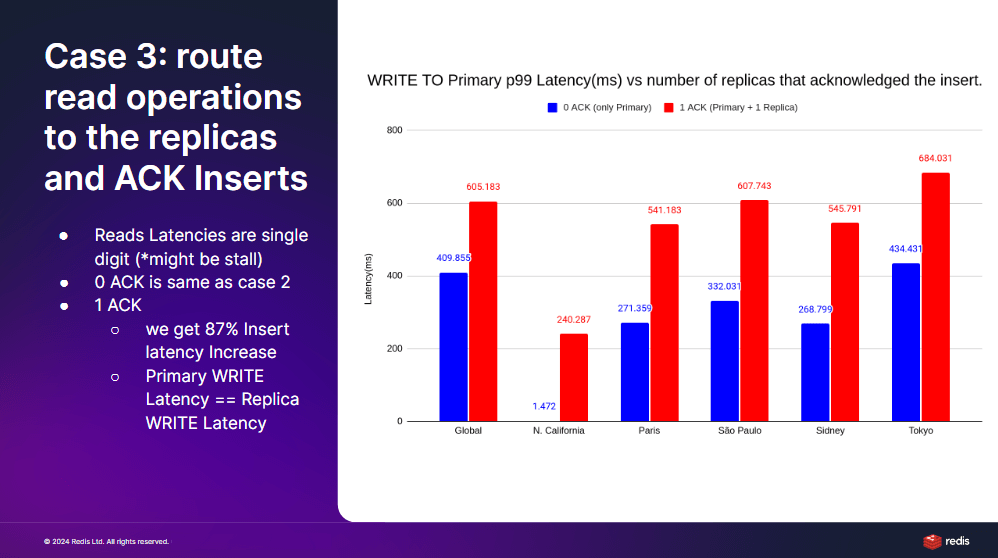

Navigating Through Case Three: Balancing Consistency with Latency

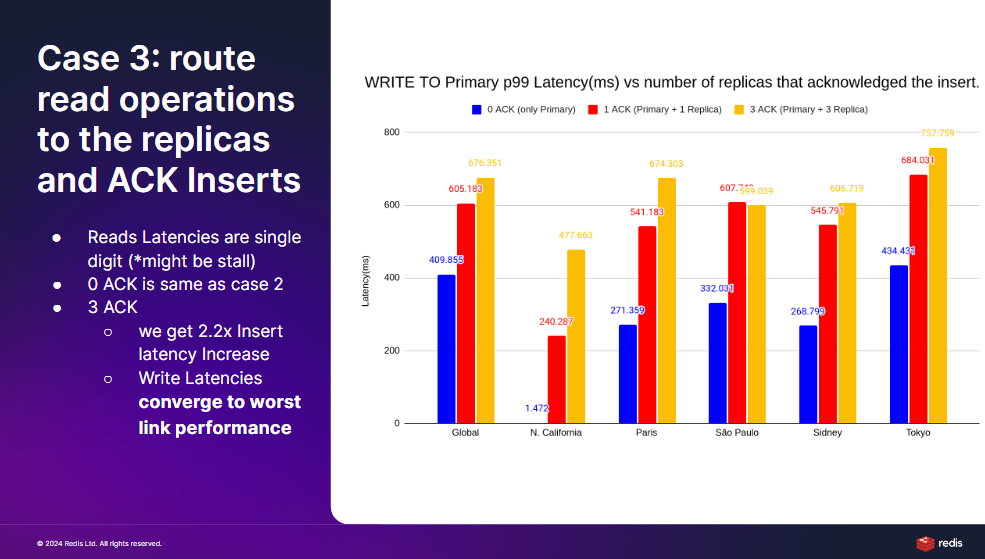

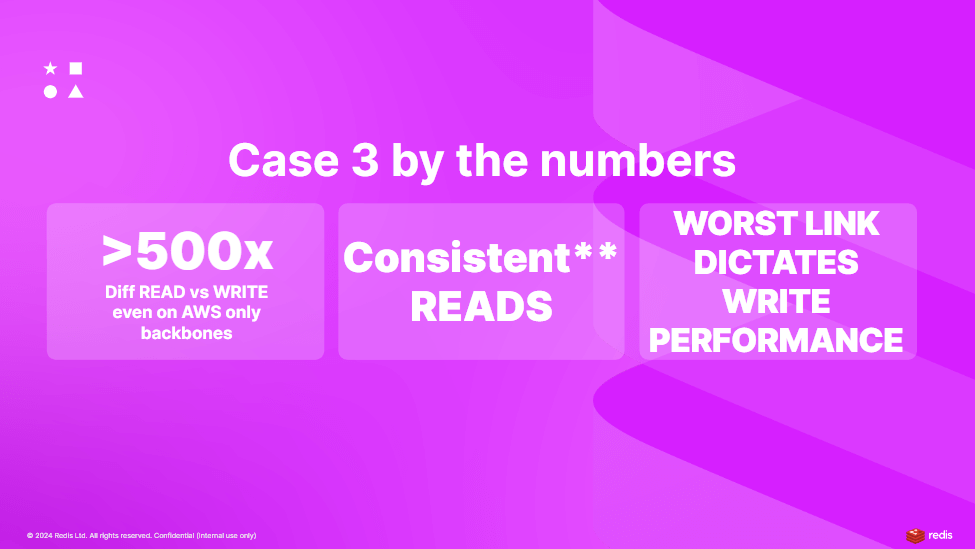

In case three, Filipe delves deeper into the trade-offs between achieving local read efficiencies and maintaining a certain level of consistency for write operations across distributed systems. This scenario introduces a nuanced approach where local reads are preserved for their latency benefits, but writes are subjected to varying degrees of acknowledgment requirements to ensure data consistency across replica nodes. This exploration of acknowledgment variations presents a clear picture of how consistency demands can impact the latency of write operations.

The Impact of Write Acknowledgment on Latency

The initial setup with zero acknowledgments mimics the conditions of case two, focusing solely on the speed of local reads without concern for write confirmations. This changes dramatically as the acknowledgment requirement is introduced, marking a significant shift in how write operations are conducted. Even a single acknowledgment from a replica node—necessitating an additional network round-trip—can profoundly affect write latency. Filipe’s findings highlight an 85% increase in latency across regions, with the primary region experiencing the most significant latency spike. This illustrates the latency implications of ensuring data consistency through acknowledgment from remote replicas.

Exploring Variations in Acknowledgment Requirements

Filipe’s exploration extends to scenarios with one and three acknowledgments for write operations, revealing the direct correlation between the number of acknowledgments and the increase in write latency. This approach underscores the operational costs associated with higher consistency levels in distributed databases. By requiring writes to be acknowledged by multiple replicas before confirming completion to the client, the system ensures greater data consistency at the expense of increased latency. This setup, simulating one local access and multiple remote accesses for each write operation, paints a detailed picture of the latency implications inherent in distributed system designs prioritizing data consistency.
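
One simple way to reason about this is to model a write as one round trip from the client to the primary, plus the wait for the required number of replica acknowledgments. The sketch below assumes the primary contacts all replicas in parallel, so waiting for k acknowledgments costs the k-th smallest primary-to-replica round trip. The round-trip times are hypothetical round numbers, not measurements from the talk.

```python
def write_latency_ms(client_to_primary_rtt_ms, primary_to_replica_rtts_ms, acks):
    """Estimated write latency when the primary waits for `acks` replica acknowledgments."""
    if not 0 <= acks <= len(primary_to_replica_rtts_ms):
        raise ValueError("acks must be between 0 and the number of replicas")
    if acks == 0:
        return client_to_primary_rtt_ms
    # Replicas are contacted in parallel, so the k-th fastest one sets the wait.
    return client_to_primary_rtt_ms + sorted(primary_to_replica_rtts_ms)[acks - 1]


# Hypothetical primary-to-replica round trips in milliseconds.
replica_rtts_ms = [100, 150, 200, 250]
local_client_rtt_ms = 1  # client in the same region as the primary

for acks in (0, 1, 3, 4):
    print(acks, write_latency_ms(local_client_rtt_ms, replica_rtts_ms, acks))
```

Under this model even a client sitting next to the primary pays a remote round trip as soon as a single acknowledgment is required, and requiring all acknowledgments ties every write to the slowest replica.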

The Direct Connection Between Consistency and Latency

The analysis of case three offers critical insights into the design considerations for distributed systems, especially those requiring stringent data consistency across global deployments. Filipe’s examination reveals a direct relationship between the degree of data consistency and the latency of write operations. As the requirement for acknowledgments from replica nodes increases, so does the latency, aligning write operation performance with the worst-case latency scenario. This finding is crucial for system architects and developers as it highlights the importance of carefully balancing consistency requirements with the expected performance impact on global systems.

Filipe’s exploration into case three reveals a startling increase in latency for write operations as consistency demands escalate. The shift from milliseconds to over 500 milliseconds in latency underscores the significant impact that write acknowledgment requirements can have on system performance. This latency explosion is directly tied to the number of network round-trips necessitated by each write operation to ensure data consistency across distributed replicas. Filipe’s analysis brings to light the critical influence of the slowest region on the overall write performance, providing a vivid illustration of the challenges posed by global system architectures.

Tailoring Benchmarking to Specific Use Cases

The insights derived from Filipe’s comprehensive benchmarking exercise offer valuable guidance for tailoring system architecture and benchmarking strategies to specific application needs. The decision to adopt a global versus single-region benchmarking approach hinges on the user distribution and operational requirements of the product in question. For global products serving a wide user base across multiple regions, understanding the nuances of multi-region latency and consistency becomes paramount. Conversely, for applications predominantly serving users within a single region, the focus may remain on optimizing performance within that confined geographic area.

The Dichotomy of Local and Global Benchmarking

Filipe emphasizes that global and single-region benchmarking serve complementary roles in evaluating system performance. While single-region benchmarks provide insights into localized performance issues, global benchmarks expose the system’s behavior under the complex conditions of international distribution. This dual approach allows developers to gain a holistic understanding of their systems, ensuring that both local optimizations and global scalability concerns are addressed. The intricate balance between read and write operation patterns, consistency requirements, and the geographical distribution of users dictates the strategic focus of benchmarking efforts.

Expanding the Horizon of Global-Scale Benchmarks

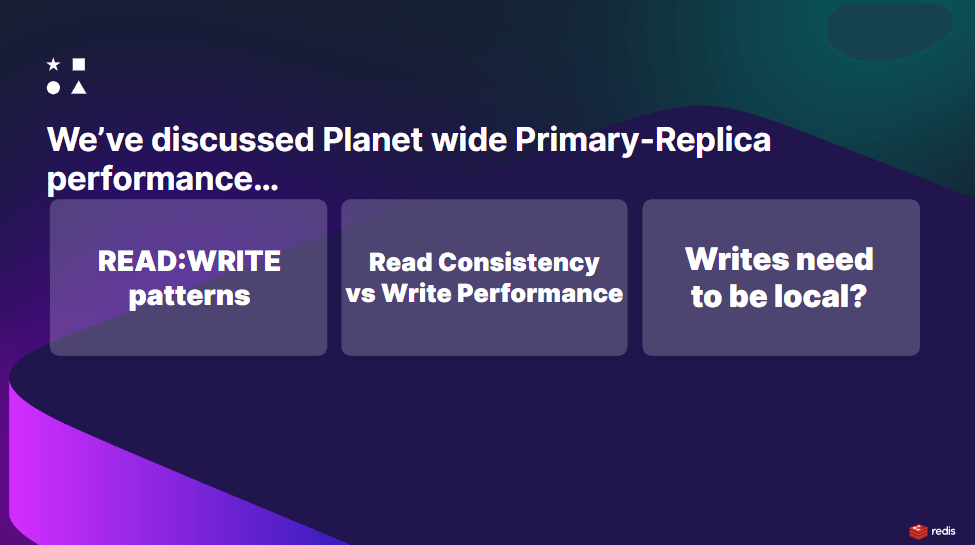

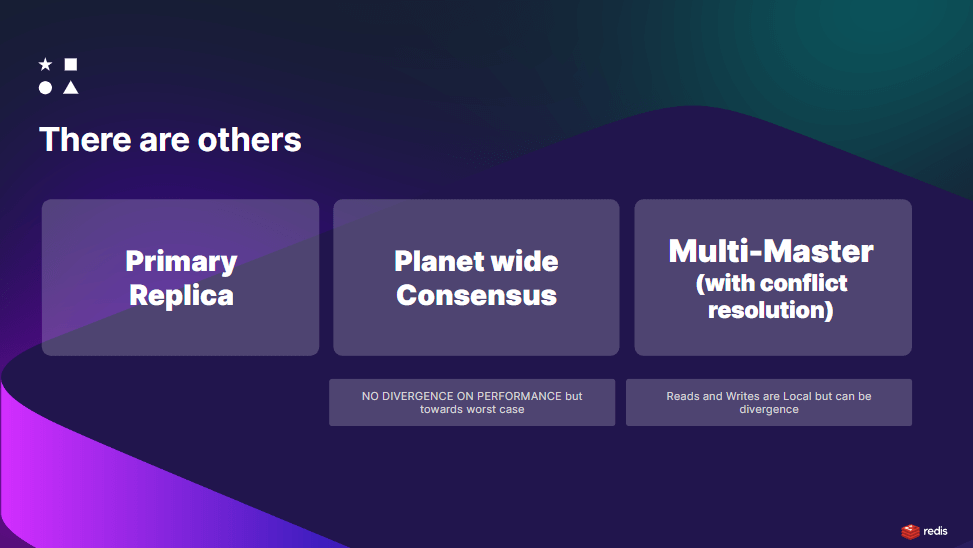

Filipe’s discourse extends beyond the primary-replica architecture, introducing additional paradigms for managing data in planet-scale applications. His exploration of global-scale distributed systems uncovers the complexity and trade-offs inherent in striving for optimal performance across vast networks. By examining three distinct approaches (primary-replica, planet-wide consensus, and multi-master solutions), Filipe illuminates the diverse strategies available for achieving varying degrees of consistency and availability.

Delving into Planet-Wide Consensus and Multi-Master Solutions

The planet-wide consensus model seeks to balance the scales between worst-case performance and high consistency, ensuring that data integrity is maintained across the globe at the expense of latency. On the other hand, the multi-master solution represents a paradigm shift towards localizing both reads and writes, with Conflict-Free Replicated Data Types (CRDTs) playing a crucial role in resolving write conflicts. This approach aims to provide the best of both worlds, offering low-latency operations while managing data consistency through innovative replication techniques.
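
To illustrate how a CRDT lets every region accept writes locally and still converge, here is a grow-only counter (G-Counter), one of the simplest CRDTs. It is a generic textbook example, not a description of how any particular multi-master product implements conflict resolution.

```python
class GCounter:
    """Grow-only counter: each replica increments its own slot; merge takes per-slot maxima."""

    def __init__(self, replica_id):
        self.replica_id = replica_id
        self.counts = {}  # replica_id -> increments observed from that replica

    def increment(self, n=1):
        if n < 0:
            raise ValueError("a G-Counter can only grow")
        self.counts[self.replica_id] = self.counts.get(self.replica_id, 0) + n

    def value(self):
        return sum(self.counts.values())

    def merge(self, other):
        """Fold another replica's state into this one; safe to repeat and order-independent."""
        for replica_id, count in other.counts.items():
            self.counts[replica_id] = max(self.counts.get(replica_id, 0), count)


paris = GCounter("paris")
tokyo = GCounter("tokyo")
paris.increment(3)  # local write in Paris, no cross-region round trip
tokyo.increment(2)  # concurrent local write in Tokyo

paris.merge(tokyo)
tokyo.merge(paris)
tokyo.merge(paris)  # merging again changes nothing
print(paris.value(), tokyo.value())
```

Both replicas end at 5 without coordinating at write time, which is the property that makes local writes possible. Richer data types need more elaborate merge rules.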

Navigating Trade-offs in Distributed System Design

Filipe’s analysis emphasizes the critical trade-offs between consistency, availability, and performance that designers of world-scale systems must navigate. The example of requiring acknowledgments from all replicas for every write operation illustrates the vulnerability of distributed systems to single points of failure, highlighting the importance of designing with resilience and performance trade-offs in mind. This insight into the primary-replica solution’s limitations underscores the need for a nuanced understanding of distributed system architectures and the impact of architectural decisions on system behavior.
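
The single-point-of-failure concern can be expressed in a few lines: if a write needs acknowledgments from every replica, one unreachable replica is enough to block it. The function and the replica states below are hypothetical and only illustrate the counting argument.

```python
def write_can_complete(replica_is_up, required_acks):
    """A write completes only if enough replicas are reachable to acknowledge it."""
    reachable = sum(1 for up in replica_is_up.values() if up)
    return reachable >= required_acks


# Hypothetical state: one of four replica regions is unreachable.
replica_is_up = {"Paris": True, "São Paulo": True, "Sydney": True, "Tokyo": False}

print(write_can_complete(replica_is_up, required_acks=4))  # all replicas required
print(write_can_complete(replica_is_up, required_acks=3))  # tolerate one failure
```

Requiring fewer acknowledgments than there are replicas trades some consistency for the ability to keep writing through a regional outage.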

Conclusion

In conclusion, Filipe Oliveira’s talk stands as a testament to the complexity and dynamism of building scalable, efficient, and reliable distributed systems in today’s interconnected world. Attendees were left with a clearer understanding of the pivotal role benchmarking plays in identifying and overcoming the challenges of planet-scale applications. Filipe’s thorough analysis and expert guidance underscore the continuous need for innovation, careful planning, and strategic thinking in the pursuit of global-scale computing excellence. This presentation not only broadened the horizons of its audience but also laid the groundwork for future advancements in distributed computing technologies.

Thank you for being part of this insightful exploration. Should you have any further questions or wish to explore more insights, please don’t hesitate to connect with us on our Document Database Community Slack.

Questions & Answers:

Q: Why was Africa, specifically South Africa, not included in the testing?

A: The absence of South Africa in the tests was due to business-related decisions affecting regional availability within the account used. It was simpler to obtain data points from Asia and Oceania, but the process of including African data points would be straightforward with the proper setup.

Q: What explains the high latency observed in the Tokyo region?

A: The unusually high latency in Tokyo, compared to other regions, is thought to be related to the backbone’s capacity rather than physical distance, which suggests that infrastructure connectivity is a significant factor. Further data and analysis are needed to pinpoint the exact cause.

Q: Is the latency issue specific to AWS in Tokyo, or would it differ with another cloud provider like Google Cloud?

A: It’s uncertain if the latency issue is exclusive to AWS. A comparative benchmark with another cloud provider, such as Google Cloud, could provide insights into whether the latency performance in Tokyo is a broader issue or specific to AWS.

Q: Are there any plans to make the benchmarking code more accessible for extension to other databases or cloud providers?

A: This was not explicitly addressed. However, the suggestion to design the benchmarking code for easy extension points to an interest in broadening future tests to cover more databases and cloud providers, which would make the benchmarks more comprehensive.

